If you are building a high-scale .NET application, you likely have a CacheService class somewhere in your project that looks exactly like this:

- Check IMemoryCache (L1).

- If missing, check IDistributedCache (Redis/L2).

- If missing, lock a semaphore (to prevent stampedes).

- Fetch data from the Database.

- Serialize it (probably using JSON).

- Save to Redis.

- Save to Memory.

We have all written this code. We have all debugged the race conditions in it. We have all argued about whether to use System.Text.Json or MessagePack.

With .NET 10, it is time to delete that class.

Microsoft now ships a built-in replacement for this pattern: HybridCache (available via the Microsoft.Extensions.Caching.Hybrid NuGet package, which first shipped in the .NET 9 timeframe). It isn’t just a wrapper; it’s a complete rethink of how caching works in .NET.

The Problem with IDistributedCache

The old abstraction (IDistributedCache) lied to us. It treated byte[] as the only currency. This forced every read/write to incur a Serialization Cost.

Even if you were fetching from local memory (L1), if you were using an IDistributedCache wrapper, you were often serializing and deserializing unnecessarily.

Worse, standard caching implementations rarely handle the “Thundering Herd” (Cache Stampede) problem out of the box. If a popular cache key expires while you have 10,000 requests per second, all 10,000 requests hit your database simultaneously.
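To make the stampede concrete, here is a minimal sketch that simulates many requests missing the cache at the same moment. `StampedeDemo`, `LoadFromDatabaseAsync`, and the 50 ms delay are hypothetical stand-ins, not part of any library; the point is only that without coordination every caller runs the query.

```csharp
using System.Linq;
using System.Threading;
using System.Threading.Tasks;

public static class StampedeDemo
{
    private static int _dbCalls;

    // Hypothetical stand-in for an expensive database query.
    private static async Task<string> LoadFromDatabaseAsync(string key)
    {
        Interlocked.Increment(ref _dbCalls);
        await Task.Delay(50); // simulated query latency
        return $"value-for-{key}";
    }

    // Simulates N requests arriving right after the key expired:
    // every request sees a miss and goes to the database on its own.
    public static async Task<int> CountDatabaseCallsAsync(int concurrentRequests)
    {
        _dbCalls = 0;
        var requests = Enumerable.Range(0, concurrentRequests)
            .Select(_ => LoadFromDatabaseAsync("user:42"))
            .ToArray();
        await Task.WhenAll(requests);
        return _dbCalls;
    }
}
```

Calling `StampedeDemo.CountDatabaseCallsAsync(100)` reports one database call per request, which is exactly the load spike described above.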

Enter HybridCache

HybridCache is a new abstract class that unifies L1 (In-Process) and L2 (Out-of-Process) caching into a single API with built-in stampede protection.
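Wiring it up might look like the sketch below. It assumes an ASP.NET Core app, the Microsoft.Extensions.Caching.Hybrid package, and Redis (via Microsoft.Extensions.Caching.StackExchangeRedis) as the L2 store; the connection string and the expiration values are placeholders, not recommendations.

```csharp
using System;
using Microsoft.AspNetCore.Builder;
using Microsoft.Extensions.Caching.Hybrid;
using Microsoft.Extensions.DependencyInjection;

var builder = WebApplication.CreateBuilder(args);

// Optional L2: HybridCache uses a registered IDistributedCache as its second level.
builder.Services.AddStackExchangeRedisCache(options =>
{
    options.Configuration = "localhost:6379"; // assumed connection string
});

builder.Services.AddHybridCache(options =>
{
    // Defaults applied when a call does not pass its own entry options.
    options.DefaultEntryOptions = new HybridCacheEntryOptions
    {
        Expiration = TimeSpan.FromMinutes(5),          // overall (L2) lifetime
        LocalCacheExpiration = TimeSpan.FromMinutes(1) // in-process (L1) lifetime
    };
});

var app = builder.Build();
app.Run();
```

If no IDistributedCache is registered, HybridCache still works as an in-process cache with stampede protection, so you can adopt it before you add Redis.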

Here is why it changes the architecture game:

1. Stampede Protection is Default

HybridCache guarantees that, within a single application instance, only one concurrent caller per key executes the factory method (the database fetch). All other callers for that key wait for the same result, so you don't need your own SemaphoreSlim anymore. Note that this coordination is per process, not cluster-wide: with several instances behind a load balancer, each instance may still run the factory once for a cold key.
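The idea behind this kind of per-key coalescing can be sketched with plain BCL types. This is an illustration of the technique, not HybridCache's actual implementation; `SingleFlight<T>` and `SingleFlightDemo` are hypothetical names.

```csharp
using System;
using System.Collections.Concurrent;
using System.Collections.Generic;
using System.Linq;
using System.Threading;
using System.Threading.Tasks;

// Illustrative only: coalesces concurrent calls for the same key into one factory run.
public sealed class SingleFlight<T>
{
    private readonly ConcurrentDictionary<string, Lazy<Task<T>>> _inFlight = new();

    public async Task<T> RunAsync(string key, Func<Task<T>> factory)
    {
        // Lazy starts the factory at most once per stored entry, even if
        // GetOrAdd races and constructs more than one Lazy instance.
        var lazy = _inFlight.GetOrAdd(key, _ => new Lazy<Task<T>>(factory));
        try
        {
            return await lazy.Value;
        }
        finally
        {
            // Forget the finished call so a later miss triggers a fresh fetch.
            _inFlight.TryRemove(new KeyValuePair<string, Lazy<Task<T>>>(key, lazy));
        }
    }
}

public static class SingleFlightDemo
{
    private static int _factoryCalls;

    public static async Task<int> CountFactoryCallsAsync(int concurrentRequests)
    {
        _factoryCalls = 0;
        var flight = new SingleFlight<string>();
        var requests = Enumerable.Range(0, concurrentRequests)
            .Select(_ => flight.RunAsync("user:42", async () =>
            {
                Interlocked.Increment(ref _factoryCalls);
                await Task.Delay(50); // simulated database latency
                return "value";
            }))
            .ToArray();
        await Task.WhenAll(requests);
        return _factoryCalls;
    }
}
```

Here all concurrent callers share the single in-flight task for the key. The dictionary lives in one process, which is also why this style of protection does not extend across separate instances.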

2. “Magic” Serialization

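This is the “Deep Tech” part your seniors will love. HybridCache doesn't just blindly serialize to JSON. By default it deserializes a fresh copy on each read, so one caller can't mutate an object that another caller is holding. But for types it knows are immutable (strings, or sealed types marked [ImmutableObject(true)]), it can hand back the same instance from the L1 (Memory) cache by reference.

- Reads from L1 (immutable types): No deserialization. Just a reference copy.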

- Reads from L2 (Redis): Only then does it deserialize.

This creates a massive performance jump for hot-path data.
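Opting a type into instance reuse is a matter of declaring it immutable. A minimal sketch, assuming a `UserDto` with the hypothetical shape shown here (the article never defines its properties):

```csharp
using System.ComponentModel;

// Sealed + [ImmutableObject(true)] signals that sharing one instance
// between callers is safe, so no per-read copy is needed.
// The Id and Name properties are assumed for illustration.
[ImmutableObject(true)]
public sealed record UserDto(int Id, string Name);
```

The attribute is a promise, not something that is enforced: if the type actually has mutable state, callers could end up modifying the shared cached instance.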

The Code: Old vs. New

Let’s look at the cleanup.

The Old Way (Painful):

    public async Task<UserDto> GetUserAsync(int id)
    {
        string key = $"user:{id}";

        // 1. Try L1
        if (_memoryCache.TryGetValue(key, out UserDto? user))
            return user;

        // 2. Try L2 (Redis)
        var bytes = await _distCache.GetAsync(key);
        if (bytes != null)
        {
            user = JsonSerializer.Deserialize<UserDto>(bytes);
            _memoryCache.Set(key, user); // Backfill L1
            return user;
        }

        // 3. Fetch DB (Danger: No Stampede Protection here!)
        user = await _repo.GetUser(id);

        // 4. Save L2 and L1
        await _distCache.SetAsync(key, JsonSerializer.SerializeToUtf8Bytes(user));
        _memoryCache.Set(key, user);

        return user;
    }

The New Way (.NET 10):

    public async Task<UserDto> GetUserAsync(int id)
    {
        // Handles L1, L2, Serialization, and Stampede Protection
        return await _hybridCache.GetOrCreateAsync(
            key: $"user:{id}",
            factory: async cancel => await _repo.GetUser(id)
        );
    }
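In practice you will usually also want per-entry expirations and cancellation to flow into the database call. The sketch below shows one way to do that; `IUserRepository`, the `UserDto` shape, the `CancellationToken` parameter on `GetUser`, and the expiration values are all assumptions added for illustration.

```csharp
using System;
using System.Threading;
using System.Threading.Tasks;
using Microsoft.Extensions.Caching.Hybrid;

public sealed record UserDto(int Id, string Name); // assumed shape

public interface IUserRepository // hypothetical repository contract
{
    Task<UserDto> GetUser(int id, CancellationToken ct);
}

public sealed class UserService(HybridCache cache, IUserRepository repo)
{
    // Shared, allocation-free options for every user entry (values are placeholders).
    private static readonly HybridCacheEntryOptions UserOptions = new()
    {
        Expiration = TimeSpan.FromMinutes(10),
        LocalCacheExpiration = TimeSpan.FromMinutes(2)
    };

    public async Task<UserDto> GetUserAsync(int id, CancellationToken ct = default)
    {
        return await cache.GetOrCreateAsync(
            $"user:{id}",
            // The token handed to the factory is passed on to the repository call.
            async cancel => await repo.GetUser(id, cancel),
            options: UserOptions,
            cancellationToken: ct);
    }
}
```

Keeping the options in a static field avoids allocating a new options object on every call, which matters on the hot paths this API is meant for.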

Why You Should Switch (The “Senior” Argument)

If you are leading a team, the argument for HybridCache isn't just "less code." It is correctness.

Most custom caching implementations I review are subtly broken. They swallow errors during Redis outages. They serialize DateTime poorly. They leak connections. HybridCache standardizes this behavior.

It also supports Tagging (evicting groups of keys) out of the box — a feature we have been begging for since .NET Core 1.0.
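As a rough sketch of how tagging can be used: entries are tagged when they are written, and `RemoveByTagAsync` later invalidates everything carrying that tag. The `UserCache` wrapper, the `tenant:{id}` tag scheme, and the `UserDto` shape are hypothetical; tag invalidation also assumes a release of the package in which it is implemented (early previews did not support it).

```csharp
using System;
using System.Threading;
using System.Threading.Tasks;
using Microsoft.Extensions.Caching.Hybrid;

public sealed record UserDto(int Id, string Name, int TenantId); // assumed shape

public sealed class UserCache(HybridCache cache)
{
    // Tags each user entry with its tenant so the whole group can be evicted together.
    public async Task<UserDto> GetAsync(
        int id,
        int tenantId,
        Func<CancellationToken, ValueTask<UserDto>> load,
        CancellationToken ct = default)
    {
        return await cache.GetOrCreateAsync(
            $"user:{id}",
            load,
            tags: new[] { $"tenant:{tenantId}" },
            cancellationToken: ct);
    }

    // Invalidates every entry tagged with this tenant.
    public async Task EvictTenantAsync(int tenantId, CancellationToken ct = default)
        => await cache.RemoveByTagAsync($"tenant:{tenantId}", ct);

    // Invalidates a single entry by key.
    public async Task EvictUserAsync(int id, CancellationToken ct = default)
        => await cache.RemoveAsync($"user:{id}", ct);
}
```

A typical use would be calling `EvictTenantAsync` after a bulk update, instead of tracking and removing every affected key by hand.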

Conclusion

We often cling to our custom helper classes because we feel we have “more control.” But in 2025, writing your own caching orchestration is like writing your own JSON parser: You probably shouldn’t.

HybridCache is simpler, faster, and safer. Delete your CacheService. Use the standard.

POSTS ACROSS THE NETWORK

The Technology Reshaping UK Medical Cannabis Services

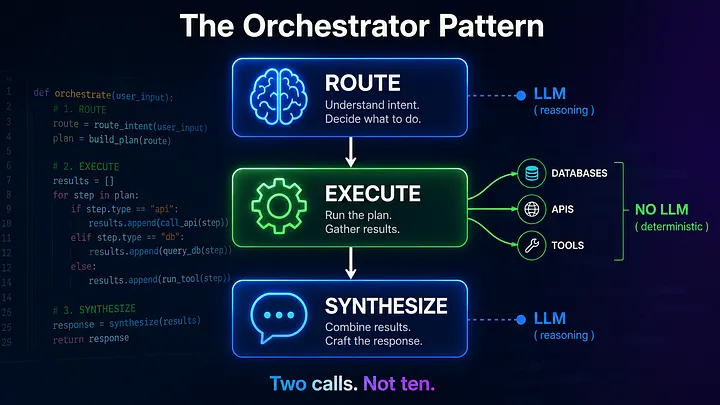

The Compounding Problem in Agentic AI Era

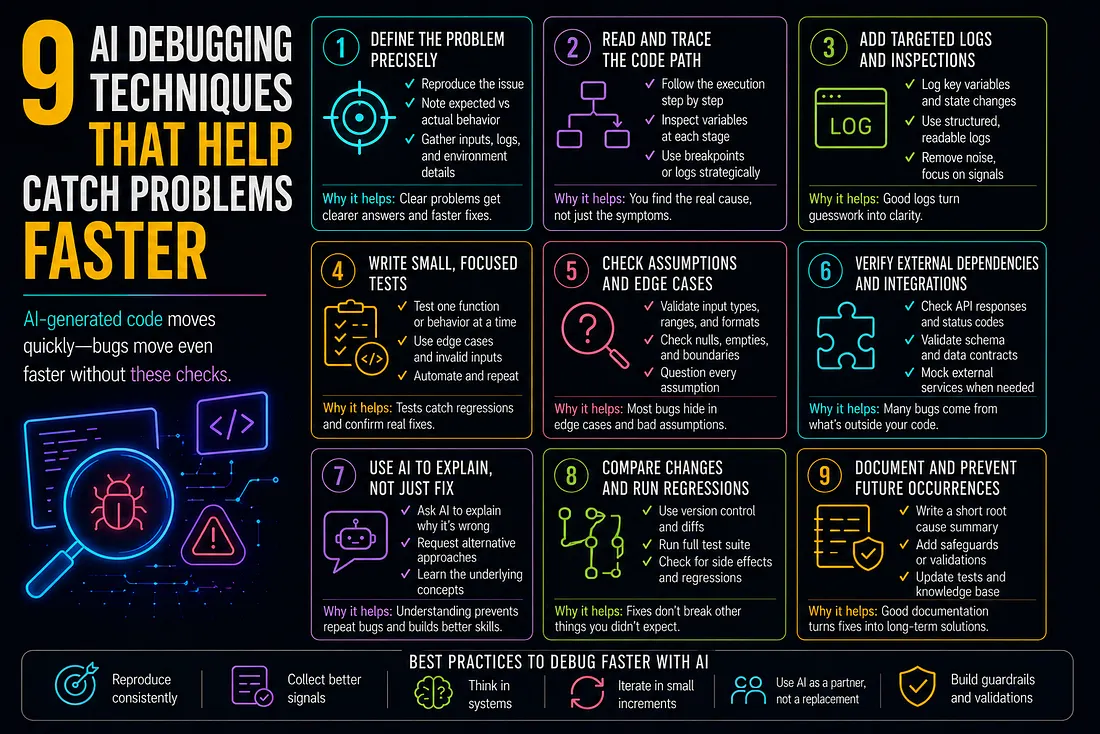

9 AI Debugging Techniques That Help Catch Problems Faster

How to Add SMS to Marketo Smart Campaigns Without Breaking Your Workflow

Best MCP Server for SEO in 2026: Guide for GEO, AEO, and SERM Experts