I Enabled Angular’s MCP Server and Now My AI Stopped Living in 2022

Cursor generated an NgModule last month.

Not on a legacy project. On a greenfield Angular 21 app I’d started two weeks earlier. I’d asked for a simple component and it gave me a full module declaration wrapped around it, declarations array and all, like it was 2021 and standalone components hadn't happened yet.

I fixed it in fifteen seconds and moved on. But I kept thinking about it. Because it wasn’t the first time. It wasn’t even the fifth time. Every few days something like this would show up. Constructor injection when I’d told the AI to use inject(). *ngIf in templates I'd already migrated to @if. The AI would watch me fix the same things and then generate them wrong again on the next component.

The problem isn’t that AI tools are bad at Angular. It’s that the Angular they know is two years old. The models were trained on data from when NgModules were still the default, when inject() was new enough that most tutorials still showed constructor injection, when *ngIf was everywhere because @if didn't exist yet. They learned that Angular and remembered it. Then Angular changed completely and the models didn't.

So I started looking at the Angular CLI MCP server, which shipped stable in Angular 21 last November.

MCP stands for Model Context Protocol. I’m not going to explain it from first principles because honestly the protocol details don’t matter much for how I’m using it. What matters is this: instead of the AI in your IDE drawing from stale training data every time you ask an Angular question, it can call live tools. The Angular CLI MCP server exposes tools that let the AI query real angular.dev documentation, read your actual angular.json, pull current best practices directly from the Angular team. It's not magic. It's the AI checking instead of guessing.
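To make "calling live tools" a bit more concrete, here is a minimal sketch of the kind of message an MCP client sends when the AI decides to use a tool. MCP is built on JSON-RPC 2.0 and tool invocations use the tools/call method. The helper name buildToolCall and the empty argument object are assumptions for illustration, not something the Angular CLI requires you to write yourself; your IDE does this for you.

```typescript
// Shape of the JSON-RPC 2.0 request an MCP client sends to invoke a tool.
interface ToolCallRequest {
  jsonrpc: "2.0";
  id: number;
  method: "tools/call";
  params: { name: string; arguments: Record<string, unknown> };
}

// Hypothetical helper: build a tools/call request for a named tool.
function buildToolCall(
  id: number,
  name: string,
  args: Record<string, unknown> = {}
): ToolCallRequest {
  return { jsonrpc: "2.0", id, method: "tools/call", params: { name, arguments: args } };
}

// get_best_practices is one of the tools named in this post.
// Passing no arguments is an assumption made for the sake of the example.
const request = buildToolCall(1, "get_best_practices");
console.log(JSON.stringify(request, null, 2));
```

The point is only that the answer comes back from a running process that can read current docs and your workspace, not from whatever the model memorized.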

Setting it up for VS Code is a .vscode/mcp.json file at your project root:

{
  "servers": {
    "angular-cli": {
      "command": "npx",
      "args": ["-y", "@angular/cli", "mcp"]
    }
  }
}

The -y flag is not optional in practice. Without it, npx can pause to ask for confirmation before installing the package, and there is nobody on the other end of that prompt to answer it. Nothing breaks visibly. The server just silently stops responding and you spend time wondering if you configured something wrong. I lost maybe twenty minutes to that before I found it in someone else's setup notes.
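If you want a guard against that mistake, a small check over the parsed config could catch it. This is a sketch under assumptions: the function name serversMissingYesFlag is hypothetical, and it only looks at servers launched through npx. It accepts both a servers key (what VS Code reads) and an mcpServers key (what Cursor reads).

```typescript
interface McpServer {
  command: string;
  args?: string[];
}

interface McpConfig {
  servers?: Record<string, McpServer>;    // VS Code style
  mcpServers?: Record<string, McpServer>; // Cursor style
}

// Hypothetical helper: names of npx-launched servers that lack -y / --yes.
function serversMissingYesFlag(config: McpConfig): string[] {
  const all = { ...(config.servers ?? {}), ...(config.mcpServers ?? {}) };
  return Object.entries(all)
    .filter(([, server]) => server.command === "npx")
    .filter(([, server]) => !(server.args ?? []).some(a => a === "-y" || a === "--yes"))
    .map(([name]) => name);
}

// Example: the config from this post, with the flag accidentally dropped.
const withoutFlag: McpConfig = {
  servers: { "angular-cli": { command: "npx", args: ["@angular/cli", "mcp"] } },
};
console.log(serversMissingYesFlag(withoutFlag));
```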

For Cursor, the file goes in .cursor/mcp.json instead of .vscode/, and the top-level key is mcpServers rather than servers. The command and args are the same. JetBrains has its own menu under the AI Assistant settings. All of them end up at the same ng mcp command.

The NgModule problem went away the next day. I asked for a component and got:

@Component({
  selector: 'app-user-profile',
  standalone: true,
  imports: [CommonModule],
  templateUrl: './user-profile.component.html'
})
export class UserProfileComponent { }

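No module. standalone: true without me asking for it. (Standalone has been the default since Angular 19, so the explicit flag is redundant, but it's harmless and a long way from a declarations array.) The list_projects tool had read my angular.json and the AI knew what kind of project it was dealing with before it wrote a single line.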

The constructor injection thing followed. I’d been correcting this one for months:

// what I kept getting before
constructor(private http: HttpClient, private router: Router) { }

// what I get now
private http = inject(HttpClient);
private router = inject(Router);

inject() isn't new. It's been around since Angular 14. But the training data for most models skews toward the older pattern because that's what existed in most of the Angular code on the internet when they were trained. The get_best_practices tool pulls the current Angular team recommendations, and inject-based DI is in there explicitly. So the AI now knows.

The control flow syntax cleaned up too. @if and @for instead of *ngIf and *ngFor. Again, not groundbreaking. But these were things I was correcting in almost every AI-generated template before, and now I'm not.
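For anyone who wants a safety net while reviewing, the recurring mistakes in this post are easy to spot mechanically. The following is a rough sketch, not a real linter: findLegacyPatterns and the pattern list are hypothetical, and the regular expressions only cover the simple cases described above.

```typescript
// Legacy constructs this post keeps correcting, paired with the modern form.
const legacyPatterns: { label: string; pattern: RegExp; prefer: string }[] = [
  { label: "NgModule", pattern: /@NgModule\s*\(/, prefer: "standalone component" },
  {
    label: "constructor injection",
    pattern: /constructor\s*\(\s*(private|public|protected|readonly)\s/,
    prefer: "inject()",
  },
  { label: "*ngIf", pattern: /\*ngIf\b/, prefer: "@if" },
  { label: "*ngFor", pattern: /\*ngFor\b/, prefer: "@for" },
];

// Hypothetical helper: list which legacy constructs appear in a source string.
function findLegacyPatterns(source: string): string[] {
  return legacyPatterns
    .filter(p => p.pattern.test(source))
    .map(p => `${p.label} -> ${p.prefer}`);
}

// An old-style template and its control-flow equivalent.
const legacyTemplate = `
<div *ngIf="user">{{ user.name }}</div>
<li *ngFor="let item of items">{{ item }}</li>
`;
const modernTemplate = `
@if (user) { <div>{{ user.name }}</div> }
@for (item of items; track item) { <li>{{ item }}</li> }
`;

console.log(findLegacyPatterns(legacyTemplate));
console.log(findLegacyPatterns(modernTemplate));
```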

I also tried the modernize experimental tool, which you have to enable separately:

{
  "servers": {
    "angular-cli": {
      "command": "npx",
      "args": [
        "-y", "@angular/cli", "mcp",
        "--experimental-tool", "modernize"
      ]
    }
  }
}

I had a service using BehaviorSubject for state with RxJS operators throughout. Standard pre-signal Angular service, nothing exotic. I asked the agent to modernize it. It called get_best_practices first, then used modernize to figure out which schematics to run, then gave me the exact CLI commands:

ng generate @angular/core:inject
ng generate @angular/core:signal-input-migration

Then it converted the BehaviorSubject to a signal and cleaned up the subscriptions. I reviewed everything before applying any of it. But the starting point was code that looked like it was written this year, not code that compiled but felt like a relic.
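To show the shape of that conversion, here is a sketch of what a simple state service looks like once the BehaviorSubject is gone. It is not my actual service. CartService is hypothetical, and the signal function below is a small stand-in written so the example runs without the framework; in a real Angular service you would import signal from @angular/core instead.

```typescript
// Stand-in for Angular's writable signal (assumption: only the read, set,
// and update parts of the API are mimicked here, with no change tracking).
interface WritableSignal<T> {
  (): T;
  set(value: T): void;
  update(fn: (current: T) => T): void;
}

function signal<T>(initial: T): WritableSignal<T> {
  let value = initial;
  const read = (() => value) as WritableSignal<T>;
  read.set = (next: T) => { value = next; };
  read.update = (fn: (current: T) => T) => { value = fn(value); };
  return read;
}

// Hypothetical service. The pre-signal version would have held a
// BehaviorSubject<string[]> and exposed it through asObservable().
class CartService {
  private readonly items = signal<string[]>([]);

  // Reading is a plain function call: nothing to subscribe to or clean up.
  getItems(): string[] {
    return this.items();
  }

  add(item: string): void {
    // Build a new array instead of mutating, so the stored reference changes.
    this.items.update(current => [...current, item]);
  }

  clear(): void {
    this.items.set([]);
  }
}

const cartService = new CartService();
cartService.add("keyboard");
console.log(cartService.getItems());
```

The subscriptions that "got cleaned up" are the ones that only existed to copy the subject's latest value into a field.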

I tried the same thing on a more complex service later. One with custom RxJS operators and some non-obvious reactive chains. It got confused. Ran the schematics fine, then added markForCheck() calls in places where a signal update would have been cleaner. Nothing broken. Just not the output I'd have produced by hand. I wouldn't use modernize on anything with complicated reactive logic. For straightforward services it's actually good.

Here’s where it still doesn’t help: anything outside Angular’s own docs.

I asked for a component using the Angular Material 19 dialog service. Got the old MatDialog.open() pattern, not the updated API. The MCP server covers angular.dev. It doesn't cover Angular Material's docs, NgRx, PrimeNG, or any other library in your stack. For those the AI is still on its own with training data.

Same problem with team conventions. The list_projects tool reads angular.json, so it knows your component prefix, your styling format, whether you're using standalone. It doesn't know that your team puts all state in a store/ folder, or that you have a base service class everyone extends, or that you've decided not to use the Angular CDK even though it's installed. That context doesn't live anywhere the MCP server can reach. You still have to explain it.
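A sketch of the kind of information that does live in angular.json may make the boundary clearer. This is not how list_projects is implemented, which I haven't read. summarizeProjects and the sample workspace are hypothetical; the keys it reads (projectType, prefix, and the style option under @schematics/angular:component) are standard workspace configuration fields.

```typescript
interface AngularProject {
  projectType?: "application" | "library";
  prefix?: string;
  schematics?: Record<string, Record<string, unknown>>;
}

interface AngularWorkspace {
  projects: Record<string, AngularProject>;
}

// Hypothetical helper: pull out the per-project settings a tool could see.
function summarizeProjects(workspace: AngularWorkspace) {
  return Object.entries(workspace.projects).map(([name, project]) => ({
    name,
    type: project.projectType ?? "(not set)",
    prefix: project.prefix ?? "(not set)",
    style: String(
      project.schematics?.["@schematics/angular:component"]?.["style"] ?? "(not set)"
    ),
  }));
}

// Sample workspace, made up for this example.
const sampleWorkspace: AngularWorkspace = {
  projects: {
    storefront: {
      projectType: "application",
      prefix: "shop",
      schematics: { "@schematics/angular:component": { style: "scss" } },
    },
  },
};

console.log(summarizeProjects(sampleWorkspace));
```

Nothing in that structure has a slot for "state goes in store/" or "extend the base service", which is why those conventions still have to be spelled out in the prompt or a rules file.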

One more thing that tripped me up: the MCP tools only fire when the AI is in agent mode. Regular chat still uses training data only. I forgot this more than once in the first week and wondered why the NgModule problem was back, then realized I’d asked in the wrong mode.

I’ve been running this setup for a few weeks now. The day-to-day difference is smaller than the pitch makes it sound, but it’s real. The Angular framework code the AI generates is mostly modern now. I’m not fixing the same structural mistakes on every component. That’s worth something, especially if you’re reviewing AI-generated code regularly.

The experimental onpush_zoneless_migration tool I haven't used seriously yet. My project's too large to run an experimental migration tool on without more confidence in it. I tested it on a single component and it correctly flagged a ChangeDetectorRef.detectChanges() call that wouldn't survive zoneless. That was accurate. Whether it stays accurate across a whole codebase I don't know.

What I do know is that setting it up took ten minutes and the NgModule generation problem, which I had been quietly fixing for months, is gone. That alone made it worth it.

POSTS ACROSS THE NETWORK

Why Tech Projects Fail and How Embedded Systems Experts Can Prevent It

InnoDB Performance Tuning – 11 Critical InnoDB Variables to Optimize Your MySQL Database

Fun Word Memory Techniques for English to Try

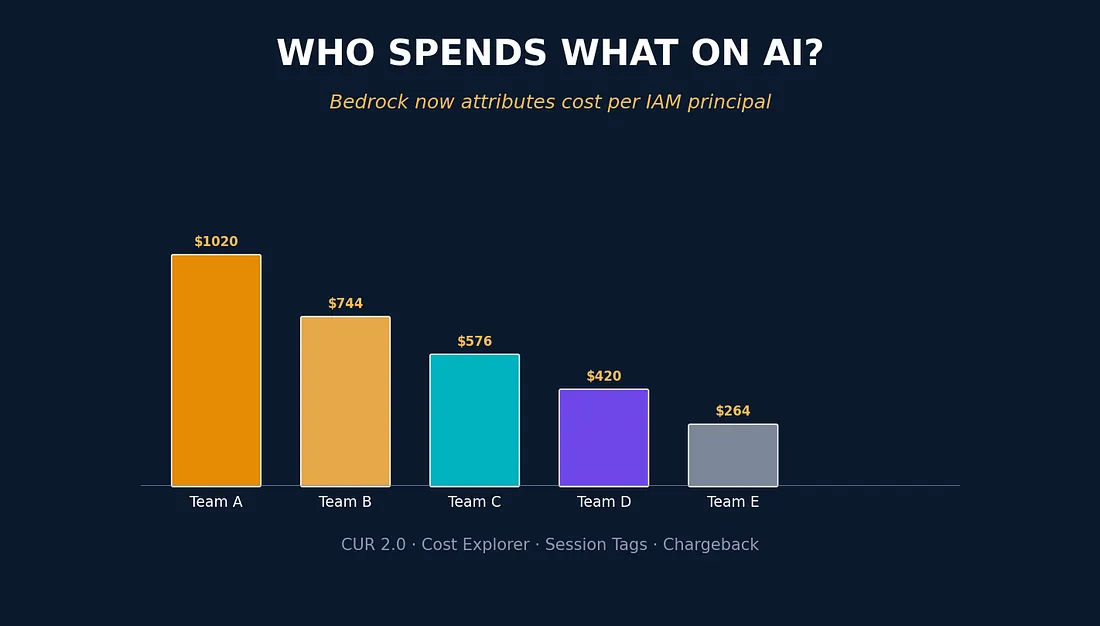

FinOps Meets IAM: How AWS Finally Delivered Granular Cost Attribution in Bedrock

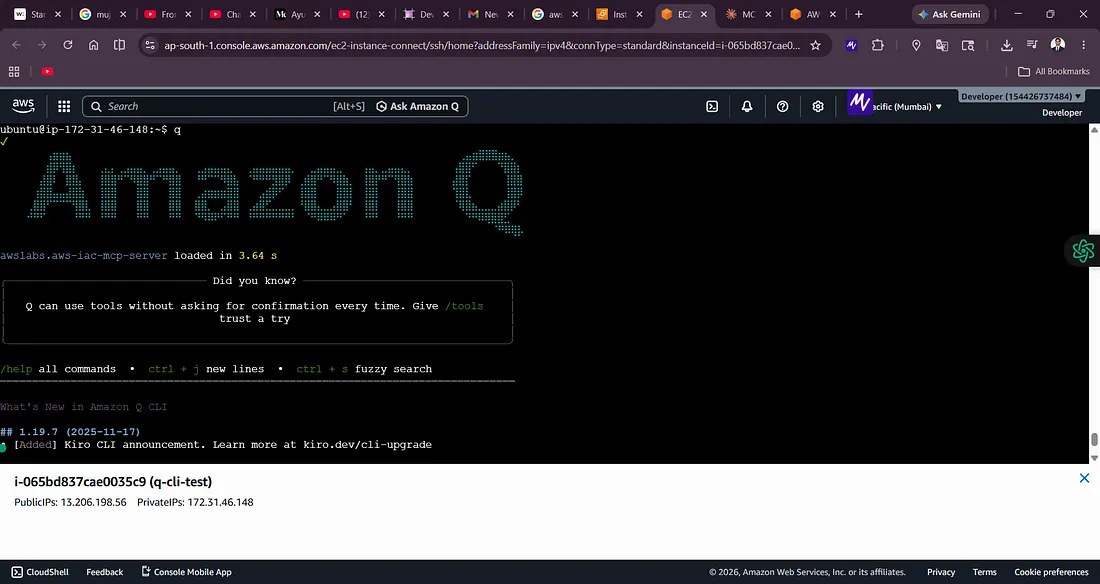

Building AWS Architecture Labs with Amazon Q CLI: From Zero Setup to VPC Peering Infrastructure