GPT-5.4 vs Claude Opus 4.6 vs Gemini 3.1 Pro vs Grok 4.2, Only One Deserves Your Stack

The Uncomfortable Truth About “Best” in 2026

There is no best model in 2026. There is only the best model for the job sitting in front of you right now, and the gap between getting that choice right and getting it wrong has never been wider.

GPT-5.4 Thinking, Claude Opus 4.6, Gemini 3.1 Pro, and Grok 4.2 Beta all wear the “top tier” label. They all promise deep reasoning, agentic workflows, and massive context windows. But spend any real time with them and the differences become obvious, sometimes dramatically so. Each model reflects a distinct philosophy about what professional work should look like, and those philosophies have practical consequences for your stack, your budget, and your output quality.

I have pulled apart all four across six key dimensions. Here is what actually matters.

Core Positioning, Or What Each Model Thinks It Is

GPT-5.4 Thinking

OpenAI built this model for complex professional work. Research, coding, spreadsheets, multi-step tool use, and financial analysis. It is the default “Thinking” option in ChatGPT for Plus, Business, and Pro subscribers, while GPT-5.3 Instant handles lighter everyday tasks.

The emphasis is on controllable reasoning behavior and long-running agent workflows. OpenAI wants GPT-5.4 to be the model you reach for when the task is genuinely hard, not when you need a quick answer.

Claude Opus 4.6

Anthropic’s most advanced Opus variant takes a different angle. It is optimized for sustained, multi-hour reasoning sessions, system-level tool orchestration, and team-style agent collaboration inside Claude Code. Think of it as the model for developers who need to hand off a complex coding session and come back hours later with meaningful progress.

The positioning here is endurance and precision over long arcs, not just single-prompt depth.

Gemini 3.1 Pro

Google DeepMind’s entry leans hardest into native multimodal support. Text, image, audio, video, and PDFs all flow through a single model with explicit thinking modes. It is aimed at enterprise and developer workflows requiring reliable long-context processing, multimodal grounding, and structured data analysis.

If your work lives inside the Google ecosystem, Gemini 3.1 Pro slots in with minimal friction.

Grok 4.2 Beta

xAI’s experimental model is the wild card. Still in public beta, Grok 4.2 is focused on rapid learning, multi-agent collaboration, and tool-heavy workflows within the X ecosystem. Unlike the others, it is designed to evolve behaviorally over time via real-world feedback, rather than shipping as a static release.

That is either exciting or terrifying, depending on how much you value stability.

Context Length and Memory, Where the Real Work Happens

Context length is not a vanity metric. It determines whether you can feed the model your entire codebase, your full legal document set, or six months of conversation history without losing coherence halfway through.

- GPT-5.4 Thinking supports up to 1 million tokens in certain Codex and developer platform contexts, with improved memory retention across multi-step workflows.

- Claude Opus 4.6 introduces a 1 million token context window in beta, with built-in compaction that summarizes earlier material without losing the thread. Anthropic reports 76% retrieval accuracy on 1M-token benchmarks, compared to 18.5% in earlier Opus releases. That jump is staggering.

- Gemini 3.1 Pro markets an “ultra-large” context window without disclosing the exact token count, paired with adaptive reasoning levels that trade latency for depth.

- Grok 4.2 Beta does not publicly quantify its context window at all. Its emphasis is on multi-agent collaboration and continuous learning rather than raw context claims.

My honest read: if long-context reliability is your top priority, Claude Opus 4.6’s retrieval accuracy numbers are the most concrete evidence any vendor has published. GPT-5.4’s million-token claim is real but documented primarily for the developer platform and Codex, not broadly for ChatGPT usage. Gemini’s vagueness here is frustrating. Grok’s silence is telling.

Reasoning Controls, How Much Thinking Do You Want

All four models now offer some form of controllable reasoning depth. This is the feature that separates professional-grade tools from chatbots.

GPT-5.4 Thinking

Four thinking-time tiers: Light, Standard, Extended, and Heavy. You can interrupt the model mid-reasoning and redirect it before it finishes. That interruptible thinking is a standout feature, genuinely useful when you realize two minutes in that you framed the question wrong.

Claude Opus 4.6

Adaptive thinking mode with four effort levels, modulating how much internal deliberation happens per request. Claude shows stronger initiative in multi-step planning and self-review, especially in long-running code and agent workflows. It catches its own mistakes more reliably than previous Opus versions.

Gemini 3.1 Pro

Three thinking levels: Low, Medium, and High. Clean, straightforward, optimized for logic-based problem solving and algorithmic execution. Less nuanced than the four-tier systems, but stable and predictable.

Grok 4.2 Beta

Multi-agent collaboration where several agents contribute to a final answer. The “rapid learning” architecture means the model’s reasoning behavior can shift over time as it incorporates real-world feedback. This is philosophically different from the others, less about user-controlled depth and more about emergent accuracy through collaboration.

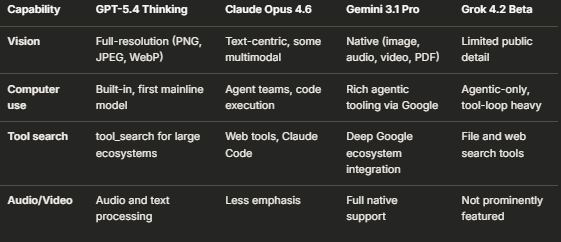

Multimodal and Tool Capabilities

This is where the models diverge most sharply.

GPT-5.4 Thinking is the first mainline OpenAI model with built-in computer-use capabilities, letting agents click, type, and navigate software interfaces directly. That is a genuine first and a meaningful competitive edge for anyone building automated desktop workflows.

Gemini 3.1 Pro wins on raw multimodal breadth. If your workflow involves video analysis, audio transcription, and PDF parsing alongside text, nothing else matches its native integration.

Claude Opus 4.6 excels at agent-team orchestration and free code execution in Claude Code. For pure software engineering workflows, its agentic tooling feels more mature than the competition.

Grok 4.2 Beta is built entirely around tool-loop workflows and agentic posture. It does one thing and commits fully to it, which I respect even if the ecosystem is still narrow.

Performance and Reliability in Real Work

Here is where marketing meets reality.

GPT-5.4 Thinking is benchmarked as stronger than GPT-5.2 on hard reasoning, coding, and document-heavy tasks, with fewer errors and false claims. OpenAI highlights its reliability for spreadsheets, polished reports, and multi-source analysis in financial and professional domains.

Claude Opus 4.6 shows improved cost efficiency, with Anthropic reporting 31% savings on some workloads when using adaptive reasoning effort levels. The 76% retrieval accuracy on million-token benchmarks is the most impressive long-context number any vendor has published this cycle.

Gemini 3.1 Pro claims stronger performance on complex tasks involving data synthesis, technical explanations, and code generation. Its mixture-of-experts architecture and thinking-level controls are tuned for stable, high-precision outputs in long-running workflows.

Grok 4.2 Beta’s performance is still in flux. Early signals suggest it outperforms Grok 4.1 on real-world agentic tasks, but it is not yet positioned as a benchmark leader on standard tests. The “rapid learning” nature means today’s performance may not predict next month’s, for better or worse.

Ecosystem and Access, The Lock-in Question

Your choice of model is also a choice of ecosystem, and ecosystems are sticky.

- GPT-5.4 Thinking lives in ChatGPT Plus, Business, and Pro tiers, plus the OpenAI developer platform and Codex. Tightly coupled with OpenAI’s tool ecosystem.

- Claude Opus 4.6 is available in Claude Pro and via the Claude developer platform, with strong presence in Claude Code for coding workflows. Anthropic’s agentic tooling and web-search integration are the draw.

- Gemini 3.1 Pro is offered through Google’s Gemini Pro tier and developer tools, with deep ties to Google Workspace, Cloud, and DeepMind tooling. If you already live in Google’s world, this is the path of least resistance.

- Grok 4.2 Beta is accessible primarily to X Premium-tier users through an experimental model picker. The ecosystem is tightly bound to xAI’s own tools and the X platform, which limits its reach but deepens its integration for X-native workflows.

So, Which One Do You Actually Pick

Let me be direct with my recommendations, knowing that nuance matters.

If you build multi-step agent systems or need computer-use capabilities, GPT-5.4 Thinking is the strongest choice right now. Built-in computer use, tool search for large ecosystems, and interruptible reasoning give it the most complete agentic toolkit.

If you write code for a living and need sustained, multi-hour sessions, Claude Opus 4.6’s endurance, self-correction, and agent-team collaboration in Claude Code are unmatched. The cost efficiency improvements and retrieval accuracy on long contexts make it the best value for coding-heavy work.

If your work is natively multimodal, involving video, audio, images, and PDFs alongside text, Gemini 3.1 Pro is the only model that handles all of those modalities as first-class inputs. The Google ecosystem integration is a bonus or a trap, depending on your existing stack.

If you want to experiment with emergent, rapidly evolving model behavior, Grok 4.2 Beta is fascinating, but I would not build production systems on it yet. The beta status and behavioral flux mean you are trading stability for novelty.

There is no universal winner. There is only the right fit for your specific workflow, ecosystem, and tolerance for risk.

Conclusion

The 2026 model landscape is not about one model dominating. It is about four distinct philosophies competing for different slices of professional work. GPT-5.4 Thinking bets on controlled depth and computer use. Claude Opus 4.6 bets on endurance and developer experience. Gemini 3.1 Pro bets on multimodal breadth and Google integration. Grok 4.2 Beta bets on emergence and rapid evolution.

The worst decision you can make is choosing based on brand loyalty or benchmark headlines alone. The best decision is mapping your actual daily workflow, identifying whether you need agentic computer use, sustained coding sessions, multimodal inputs, or experimental flexibility, and picking the model that matches.

Start by running your hardest real-world task through two of these models this week. Not a toy demo, not a benchmark recreation, but the messy, multi-step problem that actually costs you hours. The difference in output quality will make the choice obvious.

FAQ

Which model has the largest confirmed context window? Both GPT-5.4 Thinking and Claude Opus 4.6 claim 1 million tokens. Claude Opus 4.6 publishes concrete retrieval accuracy data (76% on 1M-token benchmarks), which gives more confidence in its long-context reliability. Gemini 3.1 Pro says “ultra-large” without disclosing the exact number.

Is Grok 4.2 Beta ready for production use? Not yet. It is still in public beta, its behavior can shift as it incorporates real-world feedback, and public performance data is limited. It is interesting for experimentation, but I would not build critical workflows on it today.

Which model is best for coding? Claude Opus 4.6 has the strongest coding-specific features, including agent-team collaboration in Claude Code, free code execution, and strong self-correction in long sessions. GPT-5.4 Thinking is a close second, especially for multi-step agent workflows that include non-coding tools.

Can GPT-5.4 Thinking actually control my computer? Yes. It is the first mainline OpenAI model with built-in computer-use capabilities, meaning agents can write Playwright code, read screenshots, and perform keyboard and mouse actions. No other model in this comparison offers this as a native, first-party feature.

Which model works best if I am already in the Google ecosystem? Gemini 3.1 Pro integrates deeply with Google Workspace, Cloud, and DeepMind tooling. If your files, email, and infrastructure already live in Google’s world, Gemini offers the least friction.

Do all four models offer controllable reasoning depth? Yes, but the implementations differ. GPT-5.4 offers four thinking-time tiers with mid-reasoning interruption. Claude Opus 4.6 offers four effort levels. Gemini 3.1 Pro offers three thinking levels. Grok 4.2 uses multi-agent collaboration rather than user-controlled tiers.

Which model is most cost-efficient? Anthropic reports 31% cost savings on some Claude Opus 4.6 workloads when using adaptive effort levels. No comparable efficiency data is published for the other models in this comparison, so Claude currently has the strongest cost-efficiency story.

#GPT54Thinking #ClaudeOpus46 #Gemini31Pro #Grok42Beta #ModelComparison2026 #AgenticWorkflows #LongContextModels #DevToolsCompared #ReasoningModels #TechStackDecisions

- GPT-5.4 vs Claude Opus 4.6 comparison 2026

- Gemini 3.1 Pro multimodal context window

- Grok 4.2 Beta agentic workflows review

- best reasoning model for coding 2026

- GPT-5.4 built-in computer use capabilities

- Claude Opus 4.6 million token retrieval accuracy

- controllable reasoning depth model comparison

- GPT-5.4 Thinking vs Gemini 3.1 Pro enterprise

- Grok 4.2 rapid learning model behavior

- top tier models for professional work 2026

References

- https://openai.com/index/gpt-5-4-thinking-system-card/

- https://help.openai.com/en/articles/11909943-gpt-53-and-gpt-54-in-chatgpt

- https://developers.openai.com/api/docs/guides/latest-model/

- https://www.zdnet.com/article/openai-gpt-5-4/

- https://www.tomsguide.com/ai/gpt-5-4-is-here-and-openai-just-made-every-other-ai-model-look-slow

- https://opentools.ai/news/openai-unveils-gpt-54-the-ai-thinking-powerhouse-is-here

- https://www.linkedin.com/posts/openai-for-business_today-we-launched-gpt-54-our-newest-frontier-activity-7435387735449792512-7lwV

- https://magicshot.ai/news/claude-opus-4-6-guide/

- https://ssntpl.com/blog-whats-new-claude-opus-4-6-full-feature-breakdown/

- https://platform.claude.com/docs/en/about-claude/models/whats-new-claude-4-6

- https://apxml.com/models/gemini-31-pro

- https://blog.google/innovation-and-ai/models-and-research/gemini-models/gemini-3-1-pro/

- https://deepmind.google/models/gemini/pro/

- https://www.vibingwithgrok.com/grok-4-2

- https://www.datastudios.org/post/grok-4-2-status-public-beta-signals-agentic-tooling-model-picker-reality-and-what-is-technically

POSTS ACROSS THE NETWORK

9 Best ITGC Tools for SOX Compliance in 2026

How I Choose Between GPT, Claude, Gemini and Open Source Models for Every Task

The $40,000 Mistake Nobody Talks About: How Most Companies Are Using AI Wrong

I Went to Bed While Claude Was Still Working. I Woke Up to a Fixed App.