AI Manipulation and Mind Control: How Algorithms Are Quietly Shaping Human Thoughts, Decisions, and Behavior in 2026

Most people think they’re making independent decisions online. They believe the videos they watch, the products they buy, and even the opinions they form are entirely their own. But in 2026, a growing number of experts are raising uncomfortable questions about how much influence artificial intelligence and algorithms actually have over human behavior. The reality is that modern AI systems don’t just respond to people anymore—they actively shape what people see, feel, believe, and sometimes even desire. From social media feeds to recommendation engines and personalized advertising, algorithms are quietly guiding attention at a scale humanity has never experienced before. This isn’t science fiction mind control with brain chips and hypnotic screens. It’s something more subtle—and arguably more powerful. It’s the gradual shaping of behavior through data, prediction, and emotional influence.

The Attention Economy Has Become an AI Battlefield

The internet used to be relatively simple. People searched for information, visited websites, and made decisions more directly. Today, AI-driven platforms decide what appears in front of users every second. Social media feeds, video recommendations, shopping suggestions, and even news content are filtered by algorithms designed to maximize engagement. And engagement often means keeping people emotionally reactive, curious, or addicted to scrolling. Why? Because attention equals profit. The longer users stay online, the more ads they see, the more data platforms collect, and the more predictable their behavior becomes. AI systems are exceptionally good at learning:

- What captures your attention

- What triggers emotional reactions

- What keeps you engaged longest

- What influences your buying decisions

Over time, these systems become increasingly personalized, almost like invisible psychological mirrors.

How Algorithms Learn Human Behavior

Modern AI doesn’t “understand” humans emotionally the way people do, but it recognizes patterns with astonishing accuracy. Every click, pause, like, search, and swipe feeds data into systems that build behavioral profiles. AI can often predict:

- What content you’ll engage with

- What products you might buy

- Which political or social messages resonate with you

- When you’re emotionally vulnerable

The scary part isn’t just prediction—it’s influence. If an algorithm knows what emotionally affects you, it can prioritize content that nudges your behavior in subtle ways. You may think you’re freely choosing what to consume, but much of what you see was strategically selected to maximize your reaction.
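To make the idea concrete, here is a minimal sketch of how interaction data could turn into a ranked feed. It is a toy under stated assumptions, not how any particular platform works: the function names `build_profile` and `rank_feed`, the action weights, and the sample topics are all invented for illustration.

```python
from collections import Counter


def build_profile(events):
    """Aggregate (topic, action) events into per-topic engagement scores.

    The action weights are hypothetical; real systems learn such
    signals from data rather than hard-coding them.
    """
    action_weight = {"view": 1.0, "like": 3.0, "share": 5.0}
    profile = Counter()
    for topic, action in events:
        profile[topic] += action_weight.get(action, 0.0)
    return profile


def rank_feed(profile, candidates):
    """Order candidate items by the user's past engagement with their topic."""
    # Counter returns 0 for topics the user has never interacted with.
    return sorted(candidates, key=lambda item: profile[item["topic"]], reverse=True)


# Hypothetical interaction history for one user.
events = [
    ("fitness", "view"),
    ("politics", "like"),
    ("politics", "share"),
    ("cooking", "view"),
]
profile = build_profile(events)

candidates = [
    {"id": "a", "topic": "cooking"},
    {"id": "b", "topic": "politics"},
    {"id": "c", "topic": "travel"},
]
feed = rank_feed(profile, candidates)
print([item["id"] for item in feed])  # ['b', 'a', 'c']
```

The user never asked for more political content, yet a like and a share were enough to move it to the top of the feed, while a topic they have never touched drops to the bottom.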

Emotional Manipulation Through AI

One of the most controversial aspects of AI-driven platforms is emotional targeting. Algorithms tend to prioritize emotionally intense content because strong emotions increase engagement. Fear, outrage, anxiety, and excitement often spread faster than calm, balanced information. This creates an environment where users are constantly pushed toward emotionally charged material without realizing it. For example:

- Angry content keeps users commenting

- Fear-based headlines increase clicks

- Controversial opinions drive debates

- Personalized recommendations deepen emotional attachment

Over time, this can shape how people think, what they believe, and even how they perceive reality.

The Personalization Trap

At first, personalization feels convenient. Your apps seem to “know” you. Recommendations become more accurate, content feels more relevant, and digital experiences become smoother. But personalization also creates information bubbles. AI systems often show users more of what they already agree with or emotionally respond to. This can reinforce beliefs, intensify biases, and reduce exposure to different perspectives. Imagine two people searching the same topic but receiving entirely different streams of information based on their behavior history. In many ways, people are now experiencing completely personalized versions of reality online. That level of influence was impossible before AI.
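The feedback loop behind an information bubble can be sketched in a few lines. This is a deliberately simplified model, assuming the system always shows the single highest-scoring topic and the user always engages with it. The function `simulate_feed`, the `boost` parameter, and the starting scores are hypothetical.

```python
def simulate_feed(initial_interest, rounds=10, boost=1.0):
    """Toy feedback loop: show the top-scoring topic each round, then
    raise that topic's score because the user is assumed to engage."""
    scores = dict(initial_interest)  # copy so the caller's dict is untouched
    shown = []
    for _ in range(rounds):
        top = max(scores, key=scores.get)
        shown.append(top)
        scores[top] += boost
    return shown, scores


# Two hypothetical users who start out almost identical.
user_a = {"economy": 1.1, "climate": 1.0, "sports": 1.0}
user_b = {"economy": 1.0, "climate": 1.0, "sports": 1.2}

shown_a, _ = simulate_feed(user_a)
shown_b, _ = simulate_feed(user_b)

print(set(shown_a))  # {'economy'}
print(set(shown_b))  # {'sports'}
```

A small initial difference is enough for the two feeds to diverge completely in this model. Real recommenders mix in exploration and many more signals, so the effect is usually less absolute, but the reinforcing dynamic is the same.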

AI and Consumer Behavior

AI isn’t just shaping opinions. It’s also reshaping spending habits. E-commerce platforms use algorithms to study:

- Purchasing patterns

- Browsing behavior

- Emotional triggers

- Timing and urgency responses

Ever noticed how products seem to appear right when you’re thinking about them? It’s often not coincidence. Predictive AI models are designed to anticipate consumer interest before users consciously decide to buy. This creates highly optimized systems built around psychological influence.

Can AI Influence Elections and Society?

This is one of the most debated issues surrounding artificial intelligence today. AI-driven recommendation systems have enormous power over information distribution. If algorithms prioritize certain narratives, suppress others, or amplify emotional division, they can influence public opinion at scale. Even without intentional manipulation, systems optimized purely for engagement may unintentionally favor sensationalism over accuracy. The concern isn’t necessarily that AI is “evil.” The concern is that profit-driven algorithms may shape societies in ways nobody fully controls.

Are Humans Losing Free Will?

This is where the conversation becomes philosophical. Are people truly being controlled, or simply influenced more efficiently than ever before? Humans have always been influenced by advertising, media, and social pressure. AI simply makes that process faster, smarter, and more personalized. The danger comes when people stop recognizing the influence altogether. If algorithms continuously shape what you see, what you think about, and what emotionally affects you, your decisions may feel independent while being heavily guided behind the scenes.

The Importance of Digital Awareness

The solution isn’t abandoning technology altogether. AI is deeply integrated into modern life, and many systems provide real value. The key is awareness. People need to become more conscious of:

- Why certain content appears

- How algorithms influence emotions

- How much time they spend consuming curated information

- Whether they’re actively thinking or passively reacting

Digital literacy is becoming just as important as traditional literacy.

Conclusion

AI manipulation doesn’t look like the dystopian mind control people once imagined. It’s quieter, more personalized, and woven into everyday life. Algorithms are shaping attention, influencing emotions, and guiding decisions in ways most users barely notice. The real issue isn’t whether AI can influence human behavior: it already does. The deeper question is how much influence society is willing to accept before convenience begins to outweigh autonomy. In 2026, the battle for control may not happen through force or censorship. It may happen through algorithms quietly deciding what billions of people see, feel, and believe every single day.

POSTS ACROSS THE NETWORK

The Rise of AI in Web3 UX: How AI Is Changing Product Design in 2026

10 AI Tools Content Writers Are Quietly Using in 2026 (Beyond ChatGPT)

How AI Agents Are Changing the Way Developers and Creators Approach Video Production

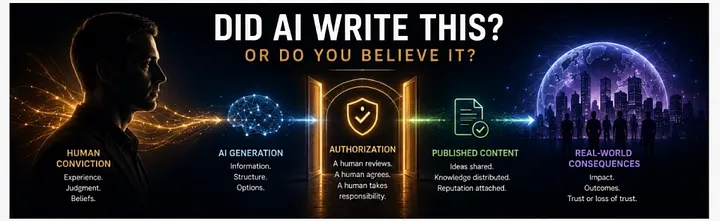

Did AI Write This?