AI Agents Are Attacking Crypto, Defending It, and Trading It. They Run on the Same Technology.

Six days.

That’s all it took for AI agents to steal $285 million from a Solana protocol, launch an intelligence platform to catch crypto criminals, and quietly manage live trading portfolios. All on the same blockchain infrastructure. All in the same week.

If that doesn’t make you uncomfortable, you’re not paying attention.

The attack side is winning

On April 1st, Drift Protocol, the largest decentralized perpetual futures exchange on Solana, lost $285 million in 12 minutes. TRM Labs attributed the attack to North Korean state-sponsored hackers who had spent six months socially engineering their way past the protocol’s security. The exploit mechanism wasn’t a smart contract flaw. It was compromised admin keys and a disabled timelock, the kind of attack that no audit would have caught.

Four days later, Ledger’s CTO Charles Guillemet went on record with a warning that should concern anyone in this space: AI has made crypto phishing attacks “nearly free to produce and impossible to spot.” Deepfake video calls that impersonate CEOs. Emails without a single grammatical error or formatting tell. Voice clones that pass verification. The red flags humans relied on for a decade have been automated away.

The numbers behind his warning are staggering. Chainalysis reported $3.4 billion in crypto theft for 2025 alone. Experian’s 2026 fraud forecast flagged AI-powered phishing and cloned websites as top emerging threats. Deepfake-related fraud attempts have surged over 2,000% in three years, according to digital identity firm Signicat.

And late last year, Anthropic’s research team demonstrated something even more unsettling. In a controlled study, AI models successfully exploited 207 out of 405 real smart contracts, finding vulnerabilities that had been exploited between 2020 and 2025. The average cost per attack? $1.22 in API fees.

The tools to break crypto are getting cheaper. The people building defenses are scrambling to keep up.

The defense side is mobilizing

The day before the Drift hack, Chainalysis announced what they called “the biggest platform shift in the company’s history.” At their annual Links conference in New York on March 31st, they unveiled blockchain intelligence agents, AI systems trained on billions of screened transactions and over ten million investigations spanning more than a decade.

These aren’t chatbots bolted onto a dashboard. Chainalysis designed them around four principles: data quality over speed, full audit trails, deterministic workflows for critical decisions, and humans always in control of what gets automated. The agents will begin rolling out over the summer, starting with investigations and compliance.

Six days earlier, TRM Labs, a blockchain analytics firm now valued at $1 billion after a funding round backed by Goldman Sachs and Citi Ventures, launched its own AI investigative tool: Co-Case Agent. Built on what TRM calls a “glass-box philosophy,” it traces fund flows, audits transaction graphs, and suggests next steps while keeping every recommendation traceable to the underlying data. It is available at no extra cost to all TRM Forensics customers, including law enforcement agencies and financial regulators worldwide.

Two of the most consequential blockchain intelligence companies deploying AI agents within a week of each other. Both emphasizing the same thing: transparency, audit trails, explainability.

But here’s the catch. The defense is rolling out “over the summer.” The attacks are happening now.

The third side nobody’s watching

While AI agents were stealing and defending, a quieter wave was building. In the first twelve weeks of 2026, at least seven major platforms launched or announced AI-powered crypto trading tools: Binance, 3Commas, MoonPay, Bybit, Nansen, Trust Wallet, and MoneyFlare. That’s not counting smaller launches.

Most promise autonomous trading, portfolio management, or market analysis. Very few explain how they make decisions. 3Commas charges $5,000 for VIP early access to a backtesting tool. Binance added 13 AI skills with no trade reasoning visible. MoneyFlare promises “fully automated” trading with zero documentation of how it works.

The same opacity problem that makes phishing effective makes it nearly impossible to evaluate whether an AI trading agent is acting in your interest or against it. When the systems doing good and the systems doing harm are built on identical technology, the question stops being “should I use AI in crypto?” and becomes something harder.

How do you tell them apart?

Three questions that separate trust from marketing

After months of watching this space, testing AI trading tools, and tracking what the defense companies are building differently from the attackers, I see a clear pattern. Trustworthy AI agents in crypto share three traits. Untrustworthy ones are missing at least one.

Can you see the reasoning?

Chainalysis built their agents to produce full audit trails. TRM Labs designed Co-Case Agent on a “glass-box philosophy” where every recommendation is traceable. Both companies arrived at the same conclusion independently: if you can’t see why an AI made a decision, you can’t trust the decision.

This applies to trading too. An AI that buys a token should be able to tell you why before it buys it. Not after. Not in a summary. Before.
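Here is a minimal sketch of what “reasoning before execution” could look like in code. It is not how milo, Chainalysis, or TRM implement anything; every name in it (`TradeThesis`, `AuditedTrader`, `buy`) is hypothetical and exists only to illustrate the idea that a trade without a written, logged thesis should be refused.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import List, Optional, Tuple


@dataclass(frozen=True)
class TradeThesis:
    """Hypothetical record an agent must write before it is allowed to trade."""
    token: str
    setup: str
    risk_assessment: str
    risk_to_reward: float
    confidence: float  # 0.0 to 1.0
    written_at: datetime = field(
        default_factory=lambda: datetime.now(timezone.utc)
    )


class AuditedTrader:
    """Toy trader that refuses to act unless a matching thesis is supplied."""

    def __init__(self) -> None:
        self.audit_log: List[Tuple[str, TradeThesis]] = []

    def buy(self, token: str, thesis: Optional[TradeThesis]) -> str:
        if thesis is None or thesis.token != token:
            raise ValueError(f"no thesis on record for {token}; refusing to trade")
        # Log the reasoning first, then act, so the log never lags the trade.
        self.audit_log.append(("BUY", thesis))
        return f"BUY {token} (confidence {thesis.confidence:.0%})"


trader = AuditedTrader()
thesis = TradeThesis(
    token="KMNO",
    setup="illustrative setup text",
    risk_assessment="illustrative risk text",
    risk_to_reward=12.0,
    confidence=0.65,
)
print(trader.buy("KMNO", thesis))  # → BUY KMNO (confidence 65%)
```

The design choice that matters is the order: the thesis is appended to the log before the trade string is returned, and a missing thesis raises instead of silently proceeding.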

Does it touch your keys?

The Drift hack drained $285 million from users who had deposited funds into the protocol’s vaults. The vulnerability wasn’t the smart contract. It was the custody architecture. Funds sitting inside someone else’s system are subject to someone else’s security failures.

Non-custodial systems, where your keys never leave your wallet, remove this entire category of risk. If your assets never touched the protocol’s custody layer, a hack of that layer can’t drain them.

Can you turn it off?

Chainalysis agents let humans decide “what can be automated and at what level of independence.” TRM’s Co-Case Agent “suggests, explains, and documents, but does not silently rewrite graphs.” Both preserve human override at every step.

An AI trading agent that can’t be paused, that executes without explaining, that makes decisions you can’t review, fails this test. Whether it’s managing investigations or managing your portfolio, the kill switch matters.
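The kill-switch test can be sketched in a few lines. This is an illustration under my own assumptions, not any vendor’s implementation: `KillSwitch` and `run_agent` are hypothetical names, and the “actions” are plain functions standing in for trades. The point is that the agent checks a human-controlled flag before every single action, not once at startup.

```python
from typing import Callable, List


class KillSwitch:
    """Hypothetical human-controlled pause flag."""

    def __init__(self) -> None:
        self._paused = False

    def pause(self) -> None:
        self._paused = True

    def resume(self) -> None:
        self._paused = False

    @property
    def paused(self) -> bool:
        return self._paused


def run_agent(actions: List[Callable[[], str]], switch: KillSwitch) -> List[str]:
    """Run queued actions one at a time, checking the switch before each."""
    executed: List[str] = []
    for action in actions:
        if switch.paused:
            break  # stop here; the remaining actions never run
        executed.append(action())
    return executed


switch = KillSwitch()


def trade_1() -> str:
    return "trade-1"


def trade_2_then_human_pauses() -> str:
    switch.pause()  # simulates the owner hitting pause mid-run
    return "trade-2"


def trade_3() -> str:
    return "trade-3"


print(run_agent([trade_1, trade_2_then_human_pauses, trade_3], switch))
# → ['trade-1', 'trade-2']
```

An agent that only checks the flag at startup would have executed all three actions here, which is exactly the failure this question is meant to catch.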

What this looks like in practice

I’ve been running an experiment since January 16th. Two SOL (roughly $300 at the time) allocated to a preset community strategy called Value Investor on a platform called andmilo.com, managed by an AI portfolio manager named milo. AutoTrade on. No manual intervention.

I’m sharing this not as a recommendation, but as one example of what answering those three questions looks like.

Before every trade, milo writes a thesis with a setup, risk assessment, key levels, and a confidence score. This one: KMNO, 12x risk-to-reward, 65% confidence.

Every trade starts with a written thesis. The screenshot above shows a real one: a position in KMNO with a detailed setup, risk assessment, key levels marked, 12x risk-to-reward ratio, 65% confidence, and a note that “confidence is moderate due to weak broader market momentum.” The reasoning is written before the entry, not after.

milo’s daily digest from April 7, 2026: $142.58 portfolio, 15 active positions (8 winning, 7 losing), 147 closed trades, 79 potential entries skipped yesterday.

This morning’s daily recap: portfolio at $142.58 (down from roughly $300, and I’m not going to spin that). 15 active positions, 8 winning, 7 losing. 147 trades closed over 82 days. Yesterday alone, milo evaluated 79 potential entries and skipped all of them due to insufficient capital. It executed 3 trades, with 2 take-profits and 0 stop-losses.
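For anyone who wants the loss as a percentage, the arithmetic is short. The starting value is the approximate $300 figure from above, so the result is approximate too, and the function names are mine, not the platform’s.

```python
def drawdown_pct(start_value: float, current_value: float) -> float:
    """Percentage lost relative to the starting value."""
    return (start_value - current_value) / start_value * 100


def share_pct(part: int, total: int) -> float:
    """Share of `part` in `total`, as a percentage."""
    return part / total * 100


start_usd = 300.00    # "roughly $300" at the start; an approximation
current_usd = 142.58  # from the April 7 daily digest

print(f"Drawdown: {drawdown_pct(start_usd, current_usd):.1f}%")  # → Drawdown: 52.5%
print(f"Open positions in profit: {share_pct(8, 15):.1f}%")      # → Open positions in profit: 53.3%
```

This is a dollar-denominated figure only. It says nothing about how much of the drop came from trading decisions versus moves in the price of SOL itself.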

Non-custodial. Funds never leave my wallet. Pause button always available. Every decision logged.

Does it pass all three questions? Yes. Has it made me money? Not yet. But I can see exactly why every decision was made, and I can pull the plug at any moment. That’s more than I can say for most of the platforms that launched this quarter.

The war is already here

The crypto Fear & Greed Index has been stuck in Extreme Fear for over a month. $3.4 billion stolen last year. AI making attacks cheaper by the day. And yet AI agents are simultaneously becoming the most promising defense mechanism and the most practical tool for navigating volatile markets.

This isn’t a future scenario. It’s happening right now, across the same blockchains, using the same underlying technology.

The question for 2026 isn’t whether AI agents will reshape crypto. They already have. It’s whether you can tell the ones working for you from the ones working against you.

Start with those three questions. If an AI agent can’t answer all of them, walk away.

POSTS ACROSS THE NETWORK

Why Tech Projects Fail and How Embedded Systems Experts Can Prevent It

InnoDB Performance Tuning – 11 Critical InnoDB Variables to Optimize Your MySQL Database

Fun Word Memory Techniques for English to Try

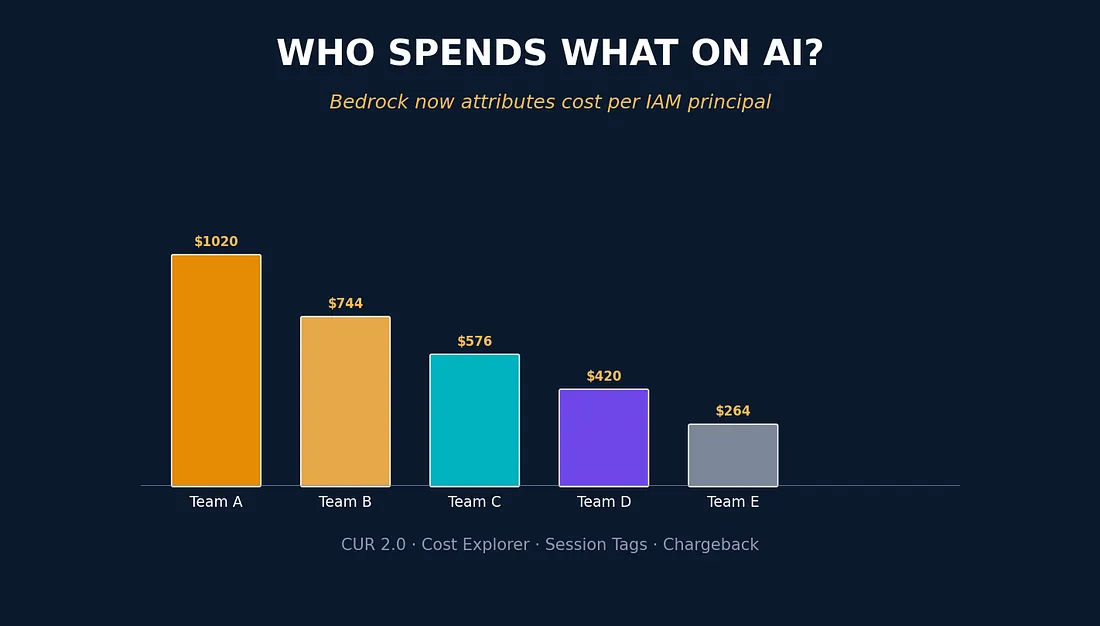

FinOps Meets IAM: How AWS Finally Delivered Granular Cost Attribution in Bedrock

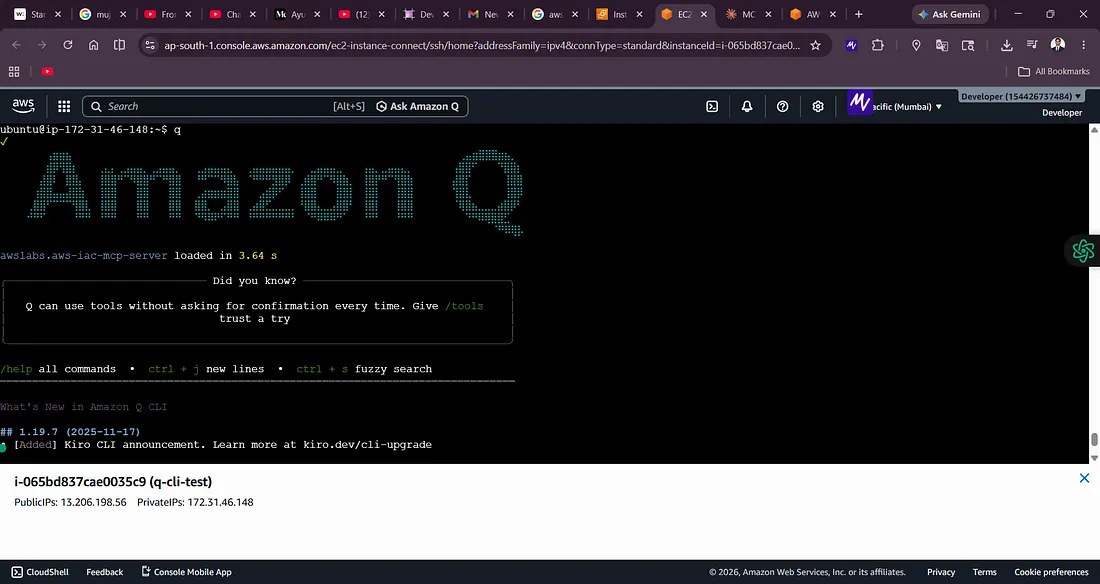

Building AWS Architecture Labs with Amazon Q CLI: From Zero Setup to VPC Peering Infrastructure