High-quality summarization of very long texts with powerful 7B LLMs on a high-end consumer GPU

Introduction

In the fast-evolving landscape of natural language processing, the ability to efficiently summarize extensive texts has become increasingly vital. Today, I am excited to share a breakthrough approach that simplifies this complex task, leveraging the power of Large Language Models (LLMs) in a unique and efficient manner.

As a data scientist working in the realm of AI and biomathematical models, I constantly seek ways to harness cutting-edge technology for practical and impactful solutions. This journey led me to explore the capabilities of a 7-billion parameter LLM, specifically optimized for local GPU deployment with AWQ 4-bit quantization, making it not only powerful but also accessible for a wide range of applications.

In this post, I will present a straightforward method for summarizing extensive texts in English (it also works for other languages), highlighting its simplicity and effectiveness. This method is not just a testament to the advancements in machine learning models but also to the art of prompt engineering and resource-efficient computing.

So, let’s dive into the world of AI-driven text summarization, where complexity meets simplicity, and efficiency becomes the key.

Hands-On with simplified but powerful text summarization using LLMs

The core concepts

In the realm of text summarization, the main challenge lies in processing large volumes of data without sacrificing the quality and contextual relevance of the output. The solution I propose hinges on a Large Language Model (LLM), specifically a 7-billion parameter model known as OpenHermes-2.5-neural-chat-7B-v3-2-7B (AWQ-quantized version by TheBloke). This model stands out for its strong performance on various language generation benchmarks, even surpassing some models with 30 and 70 billion parameters.

The approach

The strength of this method lies in its simplicity and efficiency. By leveraging the model’s capabilities, combined with intelligent text splitting and recursive summarization, we can handle extensive documents with remarkable ease. Here’s how it unfolds:

- Model and tokenizer initialization: We begin by initializing the model and tokenizer, ensuring they are optimized for local GPU execution. This step is crucial for handling the extensive computational requirements of LLMs.

- Recursive summarization function: At the heart of this process is a custom function, recursive_summarization, designed to split the document into manageable chunks. Each chunk is then summarized independently. If the document is split into multiple parts, these summaries are combined and summarized again, recursively, ensuring a comprehensive and coherent final summary.

- Prompt engineering: A key aspect of this method is the carefully crafted prompt that guides the model to produce summaries in English (and Spanish). This prompt engineering is essential for ensuring the summaries are not only accurate but also linguistically sound.

- Parameters for text generation: Fine-tuning the text generation process involves adjusting parameters like sampling, temperature, top_p, and repetition penalty. These parameters play a pivotal role in dictating the style and diversity of the generated text, allowing us to align the output closely with the desired context and quality.

- Document processing: The final step involves loading the documents with SimpleDirectoryReader and applying the summarization function. This systematic approach ensures each document is treated with the same level of precision and care.
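The control flow described in the steps above can be illustrated without any model at all. The following is a minimal sketch, not the implementation used in this post: whitespace-separated words stand in for tokens, and the hypothetical `toy_summarize` helper (which simply keeps the first few words) stands in for the LLM call. All names and sizes here are assumptions chosen for illustration.

```python
def split_into_chunks(words, chunk_size):
    """Split a list of words into consecutive chunks of at most chunk_size words."""
    return [words[i:i + chunk_size] for i in range(0, len(words), chunk_size)]


def toy_summarize(chunk, keep=5):
    """Stand-in for the LLM call (hypothetical): keep the first `keep` words."""
    return chunk[:keep]


def recursive_summary(text, chunk_size=20, keep=5):
    """Summarize chunk by chunk, then re-summarize the joined summaries
    until everything fits in a single chunk. Returns (summary, rounds)."""
    words = text.split()
    rounds = 0
    while True:
        chunks = split_into_chunks(words, chunk_size)
        summaries = [toy_summarize(c, keep) for c in chunks]
        rounds += 1
        if len(chunks) <= 1:
            return " ".join(summaries[0] if summaries else []), rounds
        # Join the partial summaries and go around again
        words = [w for s in summaries for w in s]


text = " ".join(f"w{i}" for i in range(200))
summary, rounds = recursive_summary(text)
print(rounds, summary)  # prints: 3 w0 w1 w2 w3 w4
```

Note that the loop only terminates because each summary is much shorter than a chunk; with a real model, that is something the generation length limit has to guarantee.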

The model’s 4-bit quantization format is a game-changer, making it viable for use on local GPUs (with 24GB VRAM).

Although the code focuses mainly on summaries in English, I also tested it on Spanish texts with a prompt written in Spanish and obtained results of the same quality. This shows the versatility of LLMs in handling different languages with a high level of command.
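One simple way to experiment with other output languages is to turn the prompt into a template. The sketch below reuses the wording of the English prompt shown in the code later in this post and adds a hypothetical `language` parameter. It is a simplified variation: the Spanish test mentioned above used a prompt written entirely in Spanish, which is not reproduced here.

```python
# Template based on the prompt used in recursive_summarization;
# the {language} placeholder is an assumption added for illustration.
PROMPT_TEMPLATE = """### System:
You are an expert agent in information extraction and summarization.
### User:
Read the following context document:
---------------
{context}
---------------
Your tasks are as follows:
1.- Write an extensive, fluid, and continuous paragraph summarizing the most important aspects of the information you have read.
2.- You can only synthesize your response using exclusively the information from the context document.
### Assistant:
According to the context information, the summary in {language} is: """


def build_prompt(context, language="English"):
    """Fill the template with a chunk of text and the desired output language."""
    return PROMPT_TEMPLATE.format(context=context, language=language)


prompt = build_prompt("Some text to summarize.", language="Spanish")
print(prompt[-40:])
```

Ending the prompt with the first words of the answer, as the original prompt does, nudges the model to start writing the summary directly in the requested language.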

Let's code 🚀

Environment setup

Before we embark on the text summarization part, it’s essential to set up our environment. This involves importing the necessary libraries and ensuring they are installed in your Python environment.

Here’s what you need to install (if you haven't installed it already):

pip install transformers tqdm llama_index

git clone https://github.com/casper-hansen/AutoAWQ

cd AutoAWQ

pip install -e .

Now we start by importing the required libraries from transformers, tqdm, and llama_index. These libraries provide us with the tools for model handling, progress tracking, and text processing.

from transformers import AutoTokenizer, AutoModelForCausalLM, AwqConfig
from tqdm.notebook import tqdm
from llama_index.text_splitter import TokenTextSplitter
from llama_index import Document
from llama_index import SimpleDirectoryReader

We begin by loading up our model and tokenizer. This step is crucial for preparing our model to process and generate text.

# Initialize the model and tokenizer from the Hugging Face repo
model_name_or_path = "TheBloke/OpenHermes-2.5-neural-chat-7B-v3-2-7B-AWQ"
# fuse_max_seq_len has to cover the prompt plus the generated tokens
q_conf = AwqConfig(fuse_max_seq_len=1024, do_fuse=True)
# AwqConfig is a quantization config, so it is passed as quantization_config
model = AutoModelForCausalLM.from_pretrained(model_name_or_path, quantization_config=q_conf, trust_remote_code=True, device_map='cuda:0')
tokenizer = AutoTokenizer.from_pretrained(model_name_or_path, trust_remote_code=True, use_fast=True)

The model is loaded in AWQ 4-bit quantized format; more information is available at https://github.com/mit-han-lab/llm-awq

Now, this is where the magic happens. Our custom function, recursive_summarization, takes a document and breaks it down into smaller chunks. Each chunk is then summarized, and if necessary, the process is repeated until a single comprehensive summary remains.

def recursive_summarization(document, tokenizer, model, generate_kwargs, chunk_size, summary_kind):
    """
    Recursively summarize a text using an LLM and NodeParser with TokenTextSplitter.

    :param document: Document object with text to summarize.
    :param tokenizer: Tokenizer of the LLM model.
    :param model: LLM model.
    :param generate_kwargs: Arguments for the model's text generation.
    :param chunk_size: Maximum token size per chunk.
    :param summary_kind: Kind of summary requested (not used in the body shown here).
    :return: Final summary of the document.
    """
    def recursive_summarization_helper(nodes):
        summarized_texts = []
        for node in tqdm(nodes):
            prompt = f"""### System:
You are an expert agent in information extraction and summarization.
### User:
Read the following context document:
---------------
{node.get_content()}
---------------
Your tasks are as follows:
1.- Write an extensive, fluid, and continuous paragraph summarizing the most important aspects of the information you have read.
2.- You can only synthesize your response using exclusively the information from the context document.
### Assistant:
According to the context information, the summary in English is: """
            inputs = tokenizer(prompt, return_tensors='pt').to("cuda")
            tokens = model.generate(**inputs, **generate_kwargs)
            # Decode only the newly generated tokens, skipping the prompt
            completion_tokens = tokens[0][inputs["input_ids"].size(1):]
            response_text = tokenizer.decode(completion_tokens, skip_special_tokens=True)
            summarized_texts.append(response_text)

        if len(nodes) == 1:
            # A single chunk: its summary is the final result
            return summarized_texts[0]
        else:
            # Several chunks: join the partial summaries and summarize them again
            combined_text = ' '.join(summarized_texts).strip().strip('\n')
            new_nodes = node_parser.get_nodes_from_documents([Document(text=combined_text)])
            return recursive_summarization_helper(new_nodes)

    node_parser = TokenTextSplitter(chunk_size=chunk_size, chunk_overlap=0)
    initial_nodes = node_parser.get_nodes_from_documents([document])
    return recursive_summarization_helper(initial_nodes)

This code reflects the innovative blend of advanced AI capabilities and user-friendly design, making the complex task of text summarization surprisingly straightforward. With this function, we’re not just processing text; we’re reshaping it into a concise and meaningful form, tailor-made for efficiency and effectiveness.
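The function expects a generate_kwargs dictionary, which this post does not list. As a minimal sketch, assuming typical sampling settings, it could be built as follows. The helper name `build_generate_kwargs` and every default value are assumptions for illustration, not the settings used for the results below; the keys themselves are standard arguments of the transformers `generate` method.

```python
def build_generate_kwargs(max_new_tokens=512, temperature=0.7, top_p=0.95,
                          repetition_penalty=1.1):
    """Return keyword arguments for model.generate(); all defaults are illustrative."""
    if temperature <= 0:
        raise ValueError("temperature must be positive when sampling")
    if not 0 < top_p <= 1:
        raise ValueError("top_p must be in (0, 1]")
    return {
        "do_sample": True,                         # sample instead of greedy decoding
        "temperature": temperature,                # lower values give more conservative text
        "top_p": top_p,                            # nucleus sampling threshold
        "repetition_penalty": repetition_penalty,  # values above 1 discourage repetition
        "max_new_tokens": max_new_tokens,          # upper bound on each summary's length
    }


generate_kwargs = build_generate_kwargs()
print(generate_kwargs)
```

With documents loaded through `SimpleDirectoryReader(input_dir="./docs").load_data()` (the folder name is a placeholder), each document could then be passed to `recursive_summarization(doc, tokenizer, model, generate_kwargs, chunk_size, summary_kind)`.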

Results

After delving into the technical aspects of our text summarization approach, it’s vital to see it in action. To demonstrate the efficacy of this method, let’s take a classic piece of literature, “The Great Gatsby” by F. Scott Fitzgerald, and run it through our summarization process.

The process:

- Time and Iterations: The entire process took 1 minute and 57 seconds (on an RTX 4090 with 24 GB of VRAM), a testament to the efficiency of the model and our method, especially considering the complexity and length of the text.

- Recursions: The text was processed through 4 recursive iterations. This shows the depth of analysis and the ability of our method to distill extensive information into concise summaries.
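The number of recursive passes follows from simple arithmetic on the document length, the chunk size, and the summary length. The sketch below estimates it under a worst-case assumption that every summary uses its full token budget. The input values are hypothetical: this post does not state the token count of the novel, the chunk_size, or the summary length that were used.

```python
import math


def estimate_rounds(total_tokens, chunk_size, summary_tokens):
    """Return the number of chunks in each round, assuming every summary
    uses its full budget of `summary_tokens` tokens (a worst case)."""
    if 2 * summary_tokens > chunk_size:
        # Two full-length summaries would not fit in one chunk,
        # so the text might never shrink to a single chunk.
        raise ValueError("summaries this long may never shrink to one chunk")
    chunks_per_round = []
    while True:
        chunks = max(1, math.ceil(total_tokens / chunk_size))
        chunks_per_round.append(chunks)
        if chunks == 1:
            return chunks_per_round
        total_tokens = chunks * summary_tokens


rounds = estimate_rounds(70_000, 2_000, 300)
print(rounds)  # [35, 6, 1]
```

With these illustrative numbers the text shrinks from 35 chunks to 6 and then to 1, that is, three passes. A smaller chunk size or longer summaries would add passes.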

The outcome:

Now, let’s examine the output generated by this process.

The story tells the lives of several characters in New York during the early 20th century, focusing on the narrator, Gatsby and even more importantly Daisy. The environment presented is marked by underground tension, relationships that are difficult to decipher, and I will seriously seek joy. They all participate in exposed social parties where highly charged emotions are revealed along with many secrets kept silent. Gatsby, a mysterious but charming guy, wants to win the heart of Daisy, who for him symbolizes perfection in beauty and happiness. His aspiration to achieve happiness and love leads to creating an opulent life in West Egg, Long Island, and fighting against adversaries such as Tom Buchanan, another suitor of Daisy. As the plot evolves, we show how everyone is involved in distressing and problematic situations, reflecting humanity and ethics in our behavior. The story shows us the central tragicomedy in Gatsby, passionate about Daisy, married to Tom Buchanan; both experiencing tensions and intense feelings, but unable to shape their relationship due to the control exerted by Tom. Their romantic and work experiences generate conflict and drama including betrayals, resignations, very emotional conversations and fatal endings. I also highlight the continuous search for happiness, commitment and personal self-construction, as well as the difficulty of managing the consequences of our decisions and actions. Likewise, it highlights human complexity and interactions between people, as well as the influence of external factors on our life journey.

This summary demonstrates not just the model’s ability to grasp and condense key themes and narratives, but also its linguistic prowess, particularly in maintaining the essence of the original text in a summarized form.

Hardware requirements

An important aspect to consider when working with this text summarization method is the hardware requirement. Specifically, the efficiency and speed of this process are significantly enhanced when executed on a GPU with at least 24GB of VRAM. This is due to the large model size and the intensive computational demands of processing extensive text data with a Large Language Model.
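A rough, weights-only estimate helps put these numbers in context. The sketch below assumes a nominal 7 billion parameters and ignores the quantization scales, the KV cache, activations, and framework overhead, all of which come on top and grow with the chunk size.

```python
def weight_memory_gib(n_params, bits_per_weight):
    """Approximate memory taken by the weights alone, in GiB."""
    return n_params * bits_per_weight / 8 / 2**30


fp16 = weight_memory_gib(7e9, 16)
int4 = weight_memory_gib(7e9, 4)
print(f"fp16: {fp16:.1f} GiB, 4-bit: {int4:.1f} GiB")  # fp16: 13.0 GiB, 4-bit: 3.3 GiB
```

Under these assumptions, 4-bit quantization cuts the weights to about a quarter of their 16-bit size, leaving most of a 24 GB card free for long prompts and generation.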

However, it’s worth noting that there are alternatives for those who may not have access to such high-end GPU resources. Backends like llama.cpp make it possible to run this kind of model on a CPU. While this makes the method more accessible, it's crucial to understand that the trade-off is in processing speed. Running these tasks on a CPU will be much slower than on a powerful GPU setup.

This information is vital for anyone planning to implement this text summarization approach, as it helps in setting realistic expectations regarding the resources required and the consequent processing speed.

Exploring free GPU options: Google Colab and Kaggle

In addition to the hardware requirements, it’s encouraging to know that there are accessible alternatives for those without access to a high-end GPU. Platforms like Google Colab and Kaggle offer free GPU resources, albeit with less memory than the ideal 24GB VRAM setup. While these GPUs may not match the power of more advanced setups, they still provide a viable option for running our text summarization process.

To adapt to the memory constraints of these platforms, one can adjust the chunk_size parameter in the summarization function. It's important to note that changing this parameter will impact the summarization results. A smaller chunk size may require more iterations and lose some contextual and semantic information, but it can make the process feasible on GPUs with less memory.
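Memory is not the only bound on chunk_size: the prompt instructions, the chunk itself, and the generated summary all have to fit in the sequence length the model is run with. A minimal sketch of that budget follows; the helper name and all numbers are hypothetical.

```python
def max_chunk_size(sequence_budget, prompt_overhead_tokens, max_new_tokens):
    """Largest chunk (in tokens) such that instructions + chunk + summary fit."""
    room = sequence_budget - prompt_overhead_tokens - max_new_tokens
    if room <= 0:
        raise ValueError("no room left for the document chunk")
    return room


limit = max_chunk_size(sequence_budget=4096, prompt_overhead_tokens=150, max_new_tokens=512)
print(limit)  # 3434
```

It is prudent to stay somewhat below this limit, since the text splitter may count tokens with a different tokenizer than the model's.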

Kaggle, in particular, offers an interesting opportunity with its provision of free access to multiple GPUs, each with around 15GB of VRAM. This multi-GPU environment can be leveraged to distribute the computational load, thus enhancing the feasibility and efficiency of the process.

These platforms not only democratize access to powerful computational resources but also open the door for experimentation and innovation in AI and language processing, even for individuals and organizations without access to high-end hardware.

Conclusion

As we wrap up this exploration into the realm of efficient text summarization using Large Language Models, it’s important to reflect on what we’ve covered. This journey has taken us through the intricacies of a powerful, yet surprisingly simple approach to handling extensive text data. From the initialization of a robust 7-billion parameter model to the intricacies of recursive summarization, we’ve seen how cutting-edge AI can be harnessed in practical, resource-efficient ways.

The blend of advanced AI capabilities, thoughtful prompt engineering, and efficient use of computational resources underscores a crucial point: sophisticated tasks like text summarization don’t always require complex solutions. Sometimes, simplicity, combined with the right tools and techniques, can lead to equally, if not more, effective outcomes.

Platforms like Google Colab and Kaggle further democratize this technology, making it accessible even to those without high-end hardware. This opens up a world of possibilities for individuals and organizations across the globe, allowing them to leverage AI for a myriad of applications, regardless of their hardware limitations.

As we conclude, I hope this post has enlightened you on the potential and practicalities of AI-driven text summarization. If you found this exploration insightful, I would greatly appreciate your claps and follows. Your support motivates me to continue sharing valuable insights and breakthroughs in the field of AI and data science.

Thank you for joining me on this journey, and I look forward to bringing more innovative and practical AI solutions to your attention. Keep exploring, keep innovating!

POSTS ACROSS THE NETWORK

The Technology Reshaping UK Medical Cannabis Services

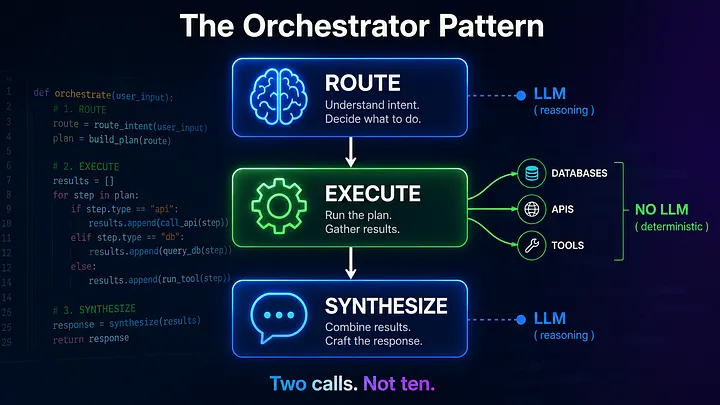

The Compounding Problem in Agentic AI Era

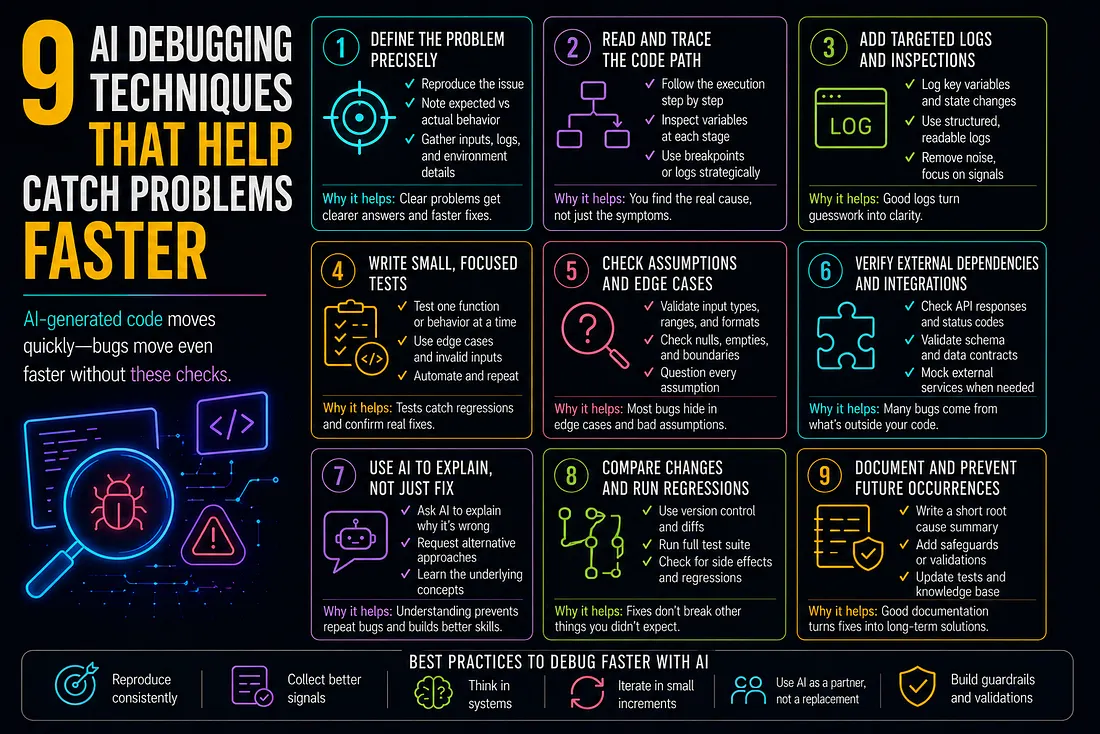

9 AI Debugging Techniques That Help Catch Problems Faster

How to Add SMS to Marketo Smart Campaigns Without Breaking Your Workflow

Best MCP Server for SEO in 2026: Guide for GEO, AEO, and SERM Experts