Bringing Multilingual Embeddings and Re-ranking to Your Local/Dev Environment with BAAI bge-m3 (8k context)

Introduction

In the vast expanse of natural language processing (NLP), finding a robust, multilingual model that can effectively handle both embeddings and re-ranking can be akin to searching for a needle in a haystack. Today, I’m thrilled to share a solution that not only addresses this challenge but also offers a swift, easy-to-deploy server for FAST local development and testing purposes.

The problem

As the digital world continues to shrink, the demand for multilingual NLP solutions has skyrocketed. Yet, the availability of models that can accurately understand and process multiple languages, especially for embeddings and re-ranking tasks in the Open Source world, remains very limited. This gap significantly hinders the development of global, inclusive applications. After testing numerous models, the scarcity of efficient, multilingual options became evident, propelling me to search for a better solution.

The solution

Enter BAAI’s bge-m3, a multilingual model with an 8k-token context length. Its proficiency in understanding and processing a wide array of languages stood out among the models I tested. Recognizing its potential, I’ve developed a simple, fast server using FastAPI to serve embeddings and re-ranking services powered by this model. This server is designed for local development and testing, offering a straightforward way to integrate advanced NLP capabilities into your projects.

How it works

The server uses FastAPI to expose endpoints for generating embeddings and for re-ranking, so developers can call it over plain HTTP. With asynchronous processing and batch handling, it’s built to manage multiple concurrent requests efficiently, so it stays responsive even in a local development environment.

Despite its simplicity, this server is packed with powerful features designed to maximize efficiency and performance for local development and testing. Here are a few highlights:

- Asynchronous Processing: Utilizing FastAPI’s asynchronous capabilities, the server efficiently handles multiple requests simultaneously, ensuring rapid response times without blocking operations.

- Dynamic Timeout Handling: To prevent long waits and potential server hang-ups, a dynamic timeout mechanism is in place. This ensures that if a request cannot be processed within a specified timeframe, it’s gracefully handled, and an appropriate response is returned to the client.

- Intelligent Batching: The server smartly accumulates incoming requests into batches, optimizing the utilization of the M3 model for embeddings and re-ranking tasks. This batching mechanism significantly improves throughput, especially when dealing with high volumes of requests.

- Flush Mechanism for Batches: A smart flush mechanism ensures that batches are not only processed at regular intervals but also when they reach a certain size. This dual-condition approach guarantees that the server remains responsive and that requests are processed in a timely manner, enhancing the overall efficiency of the model’s utilization.

- GPU Utilization and Semaphore Lock: A semaphore lock serializes access to the GPU, so only one batch runs on it at a time and concurrent-access issues are avoided.
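To make the batching, flush, and semaphore ideas above more concrete, here is a minimal sketch using only Python's standard `asyncio` library (Python 3.9+). It is not the repository's code: `MicroBatcher`, `run_batch`, `max_batch_size`, `flush_interval`, and `fake_embed` are hypothetical names I made up for illustration, and `fake_embed` is a stand-in for the real bge-m3 call.

```python
import asyncio
from typing import Any, Callable, List


class MicroBatcher:
    """Collects single requests into batches; flushes on size OR on a timer.

    Create it inside a running event loop (e.g. in an async startup hook).
    """

    def __init__(self, run_batch: Callable[[List[str]], List[Any]],
                 max_batch_size: int = 8, flush_interval: float = 0.05):
        self.run_batch = run_batch            # hypothetical stand-in for the model call
        self.max_batch_size = max_batch_size  # flush condition 1: batch is full
        self.flush_interval = flush_interval  # flush condition 2: time is up
        self.queue: asyncio.Queue = asyncio.Queue()
        self.gpu_lock = asyncio.Semaphore(1)  # only one batch on the "GPU" at a time
        self.batch_sizes: List[int] = []      # recorded only so the demo can show them

    async def submit(self, text: str) -> Any:
        """Called per request; resolves once the batch containing `text` is done."""
        future = asyncio.get_running_loop().create_future()
        await self.queue.put((text, future))
        return await future

    async def worker(self) -> None:
        """Background task: accumulate a batch, then flush it."""
        loop = asyncio.get_running_loop()
        while True:
            batch = [await self.queue.get()]  # wait for the first item
            deadline = loop.time() + self.flush_interval
            while len(batch) < self.max_batch_size:
                remaining = deadline - loop.time()
                if remaining <= 0:
                    break
                try:
                    batch.append(await asyncio.wait_for(self.queue.get(), remaining))
                except asyncio.TimeoutError:
                    break  # interval elapsed: flush whatever we have
            await self._flush(batch)

    async def _flush(self, batch) -> None:
        texts = [text for text, _ in batch]
        self.batch_sizes.append(len(texts))
        try:
            async with self.gpu_lock:
                # Run the blocking model call in a thread so the loop stays responsive.
                results = await asyncio.to_thread(self.run_batch, texts)
        except Exception as exc:
            for _, future in batch:
                if not future.done():
                    future.set_exception(exc)
            return
        for (_, future), result in zip(batch, results):
            if not future.done():  # the caller may have given up already
                future.set_result(result)


def fake_embed(texts: List[str]) -> List[List[float]]:
    """Stand-in for the model: a 1-dimensional 'embedding' per text."""
    return [[float(len(t))] for t in texts]


async def demo():
    batcher = MicroBatcher(fake_embed, max_batch_size=4, flush_interval=0.2)
    worker = asyncio.create_task(batcher.worker())
    results = await asyncio.gather(*(batcher.submit("x" * n) for n in range(1, 7)))
    worker.cancel()
    await asyncio.gather(worker, return_exceptions=True)
    return results, batcher.batch_sizes


if __name__ == "__main__":
    print(asyncio.run(demo()))
```

In this demo, six requests arrive at once with `max_batch_size=4`, so the first batch is flushed because it is full and the remaining two requests are flushed when the interval runs out. The values you would pick for a real model depend on your hardware and latency budget.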

These features, combined with the multilingual capabilities of the model, make this server a robust solution for developers looking to integrate advanced NLP functionalities into their applications with ease and a few lines of code.
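The timeout handling from the feature list can be sketched in a similar way. I am assuming here that "dynamic" means the time budget grows with the size of the request; that is my reading, not something the repository confirms. The names `timeout_for`, `slow_inference`, and `handle_request`, the default numbers, and the 504 status are all hypothetical.

```python
import asyncio
from typing import Dict, List


def timeout_for(n_items: int, base: float = 0.5, per_item: float = 0.05) -> float:
    """Assumed policy: a fixed base budget plus a little extra per input text."""
    return base + per_item * n_items


async def slow_inference(texts: List[str], delay: float) -> List[str]:
    """Stand-in for the model call; `delay` simulates how long inference takes."""
    await asyncio.sleep(delay)
    return [f"embedding-for:{t}" for t in texts]


async def handle_request(texts: List[str], delay: float,
                         base: float = 0.5, per_item: float = 0.05) -> Dict:
    """Return a result if inference finishes in time, else a timeout response."""
    budget = timeout_for(len(texts), base, per_item)
    try:
        result = await asyncio.wait_for(slow_inference(texts, delay), timeout=budget)
        return {"status": 200, "result": result}
    except asyncio.TimeoutError:
        # The pending call is cancelled by wait_for; the client gets a clear answer.
        return {"status": 504, "detail": f"timed out after {budget:.2f}s"}


if __name__ == "__main__":
    print(asyncio.run(handle_request(["hola", "hello"], delay=0.01)))
    print(asyncio.run(handle_request(["hola"], delay=0.5, base=0.05, per_item=0.01)))
```

The point of the design is that a stuck or overloaded model call never leaves the client waiting indefinitely: the request either completes within its budget or fails fast with an explicit response.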

Repository and final words

All the code, along with detailed instructions for setting up and running the server, is available in this GitHub repository. Whether you’re a seasoned developer or just starting out, you’ll find the repository equipped with everything you need to get started.

Exploring the capabilities of BAAI’s bge-m3 model has been an enlightening journey, and I’m excited for you to try it yourself. The server is a testament to the model’s versatility and effectiveness across languages, and I believe it will be a valuable tool in your NLP toolkit.

Hope you find this server as useful as I have. Stay tuned for more insights into the world of artificial intelligence and machine learning. Your support motivates me to continue exploring and sharing solutions that can make our digital world more inclusive and intelligent.

Happy coding, and let’s break the language barriers together!

DM

POSTS ACROSS THE NETWORK

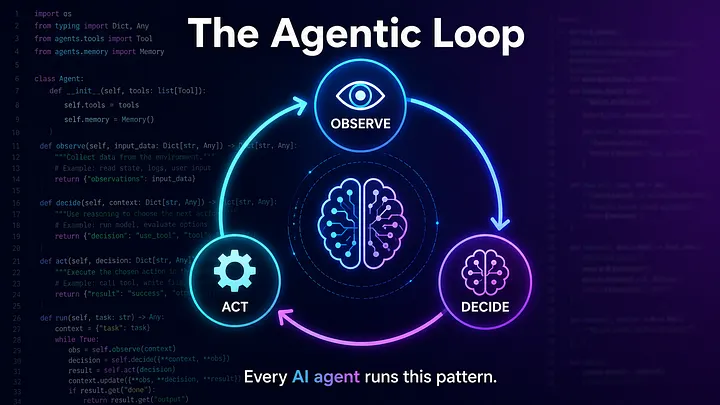

The Compounding Problem in Agentic AI Era

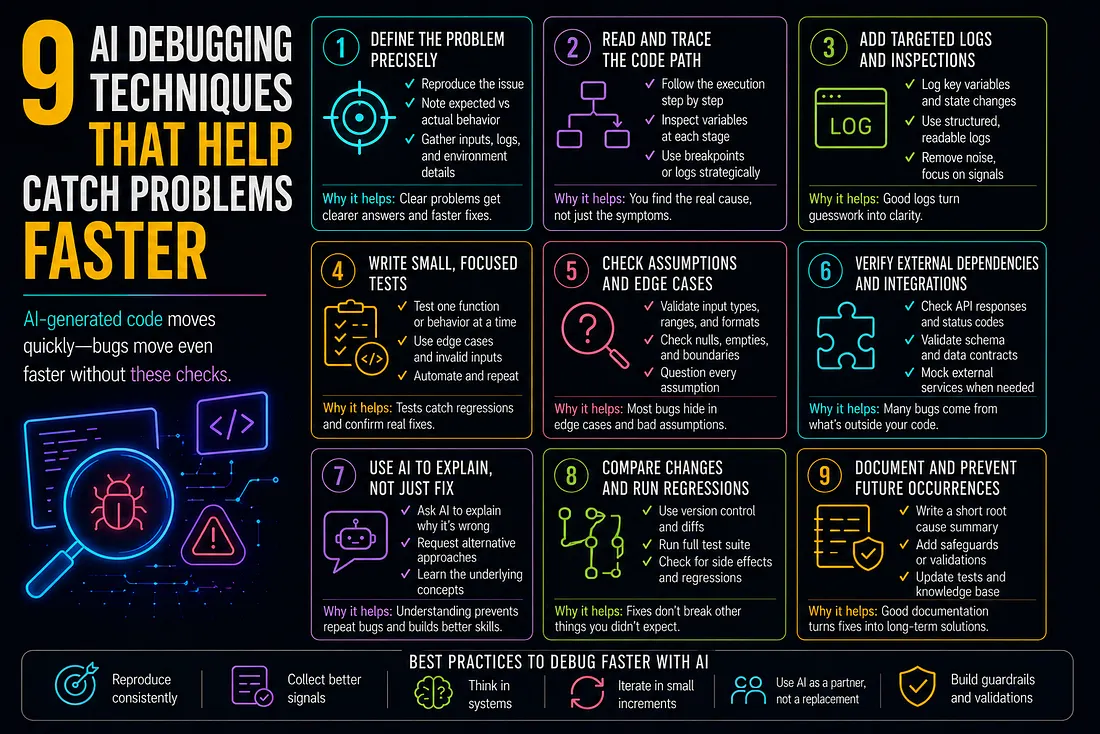

9 AI Debugging Techniques That Help Catch Problems Faster

How to Add SMS to Marketo Smart Campaigns Without Breaking Your Workflow

Best MCP Server for SEO in 2026: Guide for GEO, AEO, and SERM Experts

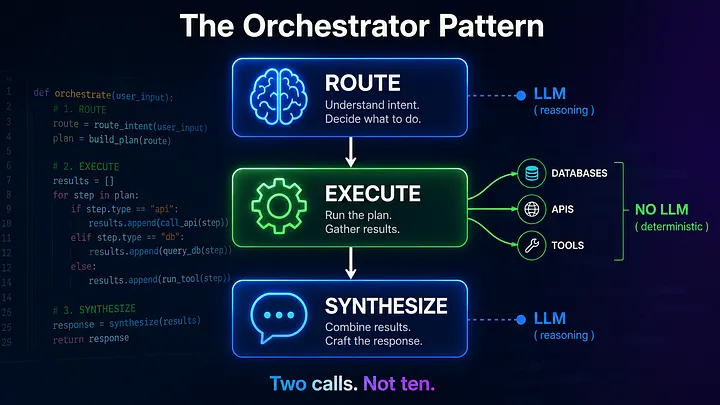

Beyond the Agentic Loop: The Orchestrator Pattern for Multi-Agent Systems